Top 10 Privacy Enhancing Technologies & Use Cases in 2024

Though data privacy laws such as GDPR in the EU and CCPA in California are meant to prevent privacy breaches, consumers’ privacy is still frequently invaded by hackers, companies and governments.

Data leakage risk grows as businesses share consumers’ data with third-party companies to increase their network visibility. Privacy-enhancing technologies (PETs) allow businesses to make use of growing amounts of data while keeping personal or sensitive information private, which improves corporate reputation and compliance.

In this article, we introduce the top 10 PETs and their use cases to show executives how they can adopt PETs to enhance their businesses.

If you prefer an automated tool, see our list of the top data loss prevention software.

What are privacy-enhancing technologies (PETs)?

Privacy-enhancing technologies (PETs) are a broad range of technologies (hardware or software solutions) designed to extract value from data, unleashing its full commercial, scientific and social potential without risking the privacy and security of that information.

Why are privacy-enhancing technologies (PETs) important now?

Like other data privacy solutions, privacy-enhancing technologies are important to businesses for three reasons:

- Data protection laws such as GDPR and CCPA require organizations to protect consumer data, and data breaches can lead to serious fines. These fines are already being levied: according to the DLA Piper GDPR Data Breach Survey 2022, GDPR fines totaled over US$1.2 billion from January 2021 to January 2022.

- If your business lacks in-house analytics or application testing capabilities, third-party organizations may need to test your data. PETs protect privacy while that data is being shared.

- Privacy breaches can harm your business’ reputation: businesses or customers (depending on your business model) may stop interacting with your brand. One example is the drop in Facebook’s share price after the Cambridge Analytica scandal.

Top 10 privacy-enhancing technology examples

Cryptographic algorithms

- 1. Homomorphic encryption: Homomorphic encryption is an encryption method that enables computations on encrypted data. It produces an encrypted result which, when decrypted, matches the result of the same operations performed on the unencrypted data (i.e. plaintext). This allows encrypted data to be transferred, analyzed and returned to the data owner, who can decrypt it and view the results on the original data. Companies can therefore share sensitive data with third parties for analysis. It is also useful for applications that keep encrypted data in cloud storage. Common types of homomorphic encryption are:

- Partially homomorphic encryption: supports only one type of operation on encrypted data, such as additions only or multiplications only, but not both.

- Somewhat homomorphic encryption: supports more than one type of operation (e.g. addition and multiplication), but only a limited number of them.

- Fully homomorphic encryption: supports both addition and multiplication with no limit on the number of operations, so arbitrary computations can in principle be run on encrypted data.

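- 2. Secure multi-party computation (SMPC): Secure multi-party computation is a related cryptographic field in which several parties jointly compute a function over their combined inputs without revealing those inputs to one another. SMPC protocols can be built on homomorphic encryption, but many rely on other primitives such as secret sharing. This makes it possible, for example, to run analytics or train machine learning models across datasets held by different organizations without pooling the raw data.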

- 3. Differential privacy: Differential privacy limits how much any shared result can reveal about a single individual. This technique adds calibrated “statistical noise” to the dataset or to query results, so that patterns of groups within the dataset can still be described while the privacy of individuals is maintained.

- 4. Zero-knowledge proofs (ZKP): Zero-knowledge proofs use cryptographic protocols that let one party prove a statement is true (for example, that it knows a password) without revealing the data that proves it.
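The homomorphic encryption item above can be illustrated with a partially homomorphic scheme. The sketch below is a toy version of the Paillier cryptosystem, which supports addition on ciphertexts. It assumes Python 3.9+, uses deliberately tiny primes, and the function names (`encrypt`, `decrypt`, `add_encrypted`) and salary figures are hypothetical. It is not secure. It only shows that a party holding ciphertexts can add them without seeing the values.

```python
import math
import random

# Toy Paillier parameters. These primes are far too small to be secure;
# real deployments use much larger primes and a vetted library.
P, Q = 1009, 1013
N = P * Q
N_SQ = N * N
G = N + 1                      # common choice of generator g = n + 1
LAM = math.lcm(P - 1, Q - 1)   # Carmichael function lambda(n)
MU = pow(LAM, -1, N)           # modular inverse; valid because g = n + 1


def encrypt(m: int, rng: random.Random) -> int:
    """Encrypt an integer 0 <= m < N using fresh randomness r coprime to N."""
    while True:
        r = rng.randrange(1, N)
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover m = L(c^lambda mod n^2) * mu mod n, where L(x) = (x - 1) / n."""
    x = pow(c, LAM, N_SQ)
    return ((x - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying two ciphertexts yields an encryption of the sum of the plaintexts (mod N)."""
    return (c1 * c2) % N_SQ


# The data owner encrypts hypothetical salaries and hands only ciphertexts to an analyst.
rng = random.Random(0)
salaries = [52_000, 61_500, 48_250]
ciphertexts = [encrypt(s, rng) for s in salaries]

# The analyst sums the ciphertexts without being able to read any individual salary.
encrypted_total = ciphertexts[0]
for c in ciphertexts[1:]:
    encrypted_total = add_encrypted(encrypted_total, c)

# Only the data owner, who holds the private values LAM and MU, can decrypt the result.
total_salary = decrypt(encrypted_total)
print(total_salary)  # 161750
```

Because the scheme is only partially homomorphic, the analyst can add encrypted values but cannot multiply two encrypted values together. That requires a somewhat or fully homomorphic scheme.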
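For the secure multi-party computation item, a common building block is additive secret sharing. The sketch below simulates three hypothetical parties in a single process. Together they compute the sum of their private counts. The modulus, function names and counts are illustrative assumptions. The sketch assumes Python 3.9+ and honest-but-curious parties that follow the protocol. Each party only ever sees random-looking shares of the others' inputs, yet the published partial sums add up to the true total.

```python
import random

# Arithmetic is done modulo a large prime (2^61 - 1 is a Mersenne prime).
MODULUS = 2**61 - 1


def share(secret: int, n_parties: int, rng: random.Random) -> list[int]:
    """Split a secret into n shares that are individually random but sum to the secret mod MODULUS."""
    shares = [rng.randrange(MODULUS) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares


def secure_sum(private_inputs: list[int], rng: random.Random) -> int:
    n = len(private_inputs)
    # Party i splits its input and (conceptually) sends share j to party j.
    all_shares = [share(x, n, rng) for x in private_inputs]
    # Party j adds up the shares it received; one share per party reveals nothing on its own.
    partial_sums = [sum(all_shares[i][j] for i in range(n)) % MODULUS for j in range(n)]
    # Publishing only the partial sums lets everyone reconstruct the total, but not the inputs.
    return sum(partial_sums) % MODULUS


# Hypothetical example: three hospitals count patients with a given condition.
rng = random.Random(42)
hospital_counts = [120, 75, 310]
joint_total = secure_sum(hospital_counts, rng)
print(joint_total)  # 505
```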
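The differential privacy item can be illustrated with the Laplace mechanism, one standard way of adding calibrated noise. The sketch below assumes a counting query. A count has sensitivity 1, because adding or removing one person changes it by at most one. `epsilon` is the privacy budget: a smaller value means more noise and stronger privacy. The ages and function names are hypothetical.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise as the difference of two i.i.d. exponential samples."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query, which has sensitivity 1."""
    true_count = sum(1 for r in records if predicate(r))
    sensitivity = 1.0  # one individual changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical dataset of ages; the true number of people aged 65+ is 4.
ages = [23, 35, 67, 71, 44, 80, 19, 66]
rng = random.Random(7)
noisy = private_count(ages, lambda a: a >= 65, epsilon=0.5, rng=rng)
print(f"Noisy count of people aged 65+: {noisy:.1f}")
```

Each released answer uses up part of the privacy budget. Answering the same query many times and averaging the results would wash out the noise, so real deployments track cumulative epsilon.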
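For the zero-knowledge proof item, the Schnorr identification protocol is a classic example. A prover convinces a verifier that it knows the secret exponent `x` behind a public value `y = g^x mod p`, without revealing `x`. The sketch below uses tiny toy group parameters: a safe prime `p = 2q + 1`, with `g = 4` generating the subgroup of order `q`. The class and function names are hypothetical. This is the interactive, honest-verifier variant, and it is not secure at this size.

```python
import random

# Toy group: P = 2*Q + 1 is a safe prime, and G = 4 = 2^2 generates the subgroup of prime order Q.
P, Q, G = 2039, 1019, 4


class Prover:
    def __init__(self, secret_x: int, rng: random.Random):
        self.x = secret_x                      # never sent to the verifier
        self.rng = rng
        self.public_y = pow(G, secret_x, P)    # published once
        self._r = 0

    def commit(self) -> int:
        """Step 1: pick a fresh random r and send the commitment t = g^r mod p."""
        self._r = self.rng.randrange(Q)
        return pow(G, self._r, P)

    def respond(self, challenge: int) -> int:
        """Step 3: answer the verifier's challenge c with s = r + c*x mod q."""
        return (self._r + challenge * self.x) % Q


def verify_round(prover: Prover, public_y: int, rng: random.Random) -> bool:
    t = prover.commit()
    c = rng.randrange(Q)                       # Step 2: verifier's random challenge
    s = prover.respond(c)
    # Accept iff g^s == t * y^c (mod p); the transcript (t, c, s) does not reveal x.
    return pow(G, s, P) == (t * pow(public_y, c, P)) % P


rng = random.Random(3)
alice = Prover(secret_x=777, rng=rng)
honest_ok = all(verify_round(alice, alice.public_y, rng) for _ in range(20))

# An impostor who does not know Alice's x passes a round only if the challenge happens to be 0.
impostor = Prover(secret_x=123, rng=rng)
impostor_ok = all(verify_round(impostor, alice.public_y, rng) for _ in range(20))
print(honest_ok, impostor_ok)
```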

Data masking techniques

Some privacy-enhancing technologies are also data masking techniques, which businesses use to protect sensitive information in their datasets.

- 5. Obfuscation: A general term for data masking methods that hide or replace sensitive information by adding distracting or misleading data to a log or profile.

- 6. Pseudonymization: Identifier fields (fields that contain information specific to an individual) are replaced with fictitious values, such as random strings or tokens, so that records cannot be attributed to a specific person without additional information that is kept separately. Businesses frequently use pseudonymization to comply with GDPR.

- 7. Data minimisation: Collecting only the minimum amount of personal data the business needs to provide a service.

- 8. Communication anonymizers: Anonymizers replace an online identity (IP address, email address) with a disposable, one-time, untraceable identity.
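The pseudonymization item can be sketched with a keyed hash (HMAC-SHA256) from Python's standard library. The same input always maps to the same pseudonym, so records can still be joined. Reversing or re-linking the pseudonyms, however, requires the secret key, which should be stored separately from the data. The key, field names and record below are hypothetical placeholders.

```python
import hashlib
import hmac

# Hypothetical key; in practice, load it from a secrets store kept apart from the data.
SECRET_KEY = b"example-key-do-not-use-in-production"
# Hypothetical identifier fields to replace.
IDENTIFIER_FIELDS = ("name", "email")


def pseudonymize(record: dict, key: bytes = SECRET_KEY) -> dict:
    """Return a copy of the record with identifier fields replaced by keyed-hash pseudonyms."""
    out = dict(record)
    for field in IDENTIFIER_FIELDS:
        if field in out:
            digest = hmac.new(key, str(out[field]).encode("utf-8"), hashlib.sha256).hexdigest()
            out[field] = "pid_" + digest[:16]  # truncated for readability
    return out


customer = {"name": "Jane Doe", "email": "jane@example.com", "country": "DE", "basket_total": 84.90}
masked = pseudonymize(customer)
print(masked)
```

A keyed hash is used rather than a plain hash because an attacker can reverse unkeyed hashes of names or emails by hashing likely candidate values. Under GDPR, pseudonymized data still counts as personal data.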
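Data minimisation is mostly a matter of policy. It can still be enforced in code, by keeping only the fields each processing purpose needs and by storing derived facts instead of raw values where possible. The purpose-to-field mapping, field names and dates below are hypothetical.

```python
from datetime import date

# Hypothetical mapping from processing purpose to the only fields that purpose needs.
REQUIRED_FIELDS = {
    "shipping": {"name", "street", "city", "postcode"},
    "age_verification": {"is_adult"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Keep only the fields required for the given purpose and drop everything else."""
    allowed = REQUIRED_FIELDS[purpose]
    return {k: v for k, v in record.items() if k in allowed}


def is_adult(birth_date: date, today: date, adult_age: int = 18) -> bool:
    """Derive a yes/no fact so the full birth date does not need to be retained."""
    age = today.year - birth_date.year - ((today.month, today.day) < (birth_date.month, birth_date.day))
    return age >= adult_age


signup = {
    "name": "Jane Doe", "street": "1 Main St", "city": "Berlin", "postcode": "10115",
    "phone": "+49 30 0000000", "birth_date": date(2001, 5, 17),
}
shipping_record = minimize(signup, "shipping")
verification_record = minimize(
    {**signup, "is_adult": is_adult(signup["birth_date"], today=date(2024, 3, 1))},
    "age_verification",
)
print(shipping_record)
print(verification_record)  # {'is_adult': True}
```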

With the help of AI & ML algorithms

- 9. Synthetic data generation: Synthetic data is artificially created data produced by algorithms, including ML algorithms. Suppose you are interested in privacy-enhancing technologies because you need to move your data into a testing environment that third-party users can access. In that case, generating synthetic data with the same statistical characteristics as the original is often a better option than sharing the real data.

- 10. Federated learning: This is a machine learning technique that trains an algorithm across multiple decentralized edge devices or servers holding local data samples, without exchanging those samples. Because the data stays decentralized, users can also achieve data minimization by reducing the amount of data that must be retained on a centralized server or in cloud storage.
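A very simple synthetic data generator fits a statistical model to the real data and samples new records from it. The sketch below fits a bivariate normal distribution (means, standard deviations and correlation) to a small hypothetical table of customer ages and monthly spend, then samples synthetic rows. The function names and data are illustrative assumptions. Production generators use richer models, such as copulas or neural networks. Their output should also be checked for privacy leakage, since a fitted model can memorize rare records.

```python
import math
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def synthesize(xs, ys, n, rng):
    """Fit a bivariate normal to (xs, ys) and draw n synthetic (x, y) pairs."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    sx, sy = statistics.stdev(xs), statistics.stdev(ys)
    rho = pearson(xs, ys)
    k = math.sqrt(max(0.0, 1 - rho * rho))
    rows = []
    for _ in range(n):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        # y reuses z1 so the synthetic columns keep the real correlation rho.
        rows.append((mx + sx * z1, my + sy * (rho * z1 + k * z2)))
    return rows


# Hypothetical "real" data: customer age and monthly spend.
real_age = [23, 31, 45, 52, 38, 60, 27, 41, 35, 49]
real_spend = [120, 180, 260, 300, 210, 340, 150, 240, 200, 280]

synthetic = synthesize(real_age, real_spend, n=5000, rng=random.Random(11))
syn_age = [a for a, _ in synthetic]
syn_spend = [s for _, s in synthetic]
print(round(statistics.mean(syn_age), 1), round(pearson(syn_age, syn_spend), 3))
```

This generator preserves only means, spreads and one correlation. It does not enforce domain rules (for example, it can occasionally emit an implausible age), which richer generators handle.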
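Federated learning can be sketched with federated averaging (FedAvg). Each client runs a few steps of training on its own data. A server then averages the resulting model weights, weighting each client by its number of samples. The sketch below fits a one-variable linear model. The three clients, their data (drawn from the hypothetical relationship y = 2x + 1), the learning rate and the round count are all assumptions. Only weights leave a client. Even so, model updates can still leak information, so real systems often combine FedAvg with secure aggregation or differential privacy.

```python
# Hypothetical setup: three clients each hold private (x, y) samples of y = 2x + 1.
CLIENT_DATA = [
    [(x / 10, 2 * (x / 10) + 1) for x in range(0, 4)],
    [(x / 10, 2 * (x / 10) + 1) for x in range(4, 7)],
    [(x / 10, 2 * (x / 10) + 1) for x in range(7, 11)],
]


def local_update(weights, data, lr=0.1, epochs=5):
    """Run a few epochs of full-batch gradient descent on one client's data (MSE loss)."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def federated_averaging(client_data, rounds=300):
    """Server loop: broadcast weights, collect local updates, average them by sample count."""
    w, b = 0.0, 0.0
    total = sum(len(d) for d in client_data)
    for _ in range(rounds):
        # Raw samples never leave a client; only the updated (w, b) pairs are sent back.
        updates = [(local_update((w, b), d), len(d)) for d in client_data]
        w = sum(uw * n for (uw, _), n in updates) / total
        b = sum(ub * n for (_, ub), n in updates) / total
    return w, b


w, b = federated_averaging(CLIENT_DATA)
print(f"w={w:.4f}, b={b:.4f}")  # expected to be close to w=2, b=1
```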

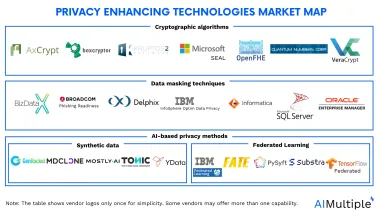

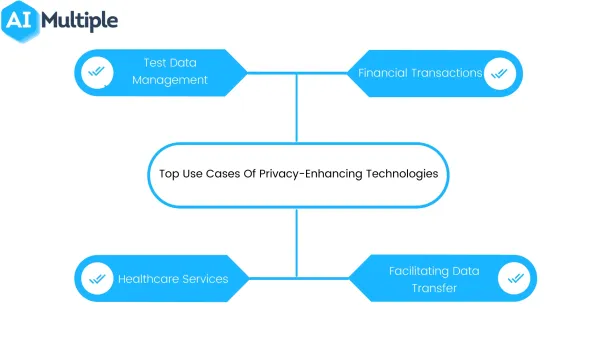

PETs market overview

The PETs market encompasses a diverse array of tools, models and libraries designed to safeguard data privacy. For instance, categories such as synthetic data generators and data masking tools each include more than 20 distinct tools.

Because these tools are so diverse, they are hard to shortlist individually. For clarity, we have grouped them in the cover image above to provide a comprehensive overview.

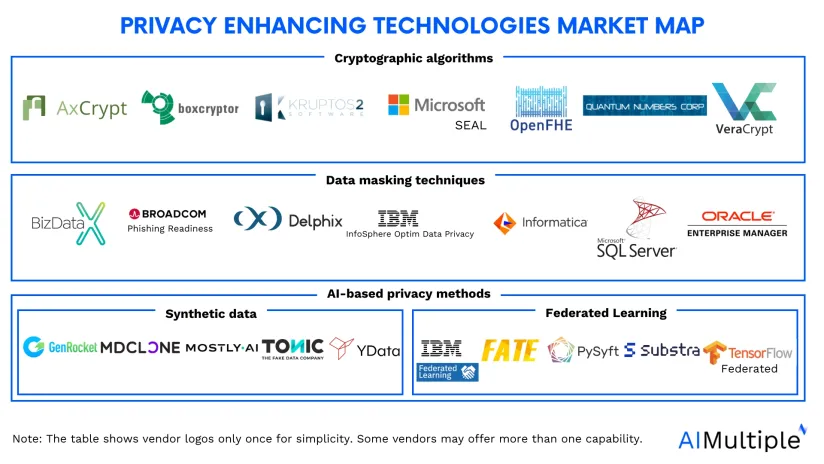

What are the top use cases of PETs?

- Test data management: Application testing and data analysis are sometimes handled by third-party providers. Even when they are handled in-house, companies should minimize internal access to customer data. Organizations therefore need a suitable PET that does not significantly affect test results.

- Financial transactions: Financial institutions are responsible for protecting their customers’ privacy, since citizens are free to conduct private deals and transactions with other parties.

- Healthcare services: The healthcare industry collects and, when needed, shares patients’ electronic health records (EHR). For example, clinical data can be used to search for adverse effects of various drug combinations. In such cases, healthcare companies use PETs to protect the privacy of patients’ data.

- Facilitating data transfer between multiple parties, including intermediaries: For businesses that act as a middleman between two parties, PETs are crucial because these businesses are responsible for protecting the privacy of both parties’ information.

Choosing the right privacy-enhancing tool for your business

Navigating the array of privacy-enhancing technologies on the market requires a strategic approach tailored to your business needs. To ensure smooth integration with your software stack and IT infrastructure, consider the following steps:

1. Identify your needs and goals

First, identify the issues you aim to solve by deploying a PET. To do this, you may:

a.) Assess your data landscape: Identify the volume and nature of the data your business manages. Determine whether it is predominantly structured or unstructured, as this influences which PETs best suit your requirements.

b.) Map third-party data sharing: Understand how and with whom your data is shared. If your data is sent to external parties for processing, prioritize solutions like homomorphic encryption, which keep data confidential even while a third party computes on it.

c.) Define data access needs: Clearly distinguish the level of access required to the dataset, assessing whether full access is essential or whether access to only the result/output suffices. Additionally, consider whether personally identifiable information needs to be obfuscated for enhanced privacy.

d.) Determine data utilization: Check whether you aim to use the data for statistical analysis, market insights, machine learning model training or similar purposes.

2. Evaluate different types of PETs:

Consider the three main categories of PETs: cryptographic tools, data masking techniques, and AI-based solutions like synthetic data generators. Identify which type aligns best with your privacy objectives and data protection needs.

3. Shortlist tools based on categories:

Once you’ve identified the PET categories relevant to your needs, shortlist specific tools within each category. Consider aspects such as functionality, scalability, and compatibility with your existing infrastructure.

4. Evaluate IT infrastructure:

Conduct a thorough evaluation of your IT infrastructure, taking into account network and computational capabilities. This assessment will guide you in selecting PETs that seamlessly integrate with your enterprise resources. Identify areas that may require upgrades for compatibility.

5. Consider budgetary allocations:

Be proactive in budget planning, recognizing that PETs can vary in cost. Allocate resources based on your specific privacy requirements and financial capacity. Consider factors such as scalability, maintenance, and potential additional costs associated with the chosen PET solution.

Don’t forget to check our article on data security best practices.

Cem has been the principal analyst at AIMultiple since 2017. AIMultiple informs hundreds of thousands of businesses every month (as per Similarweb), including 60% of the Fortune 500.

Cem's work has been cited by leading global publications including Business Insider, Forbes, Washington Post, global firms like Deloitte, HPE, NGOs like World Economic Forum and supranational organizations like European Commission.

Throughout his career, Cem served as a tech consultant, tech buyer and tech entrepreneur. He advised businesses on their enterprise software, automation, cloud, AI / ML and other technology-related decisions at McKinsey & Company and Altman Solon for more than a decade. He also published a McKinsey report on digitalization.

He led technology strategy and procurement of a telco while reporting to the CEO. He has also led the commercial growth of deep tech company Hypatos, which reached a 7-digit annual recurring revenue and a 9-digit valuation from zero within two years. Cem's work at Hypatos was covered by leading technology publications like TechCrunch and Business Insider.

Cem regularly speaks at international technology conferences. He graduated from Bogazici University as a computer engineer and holds an MBA from Columbia Business School.
