The first chatbot emerged in the 1960s, and chatbots became commercially available in the late 2000s, but they were never as popular as they are today, thanks to ChatGPT. However, ChatGPT’s success shouldn’t be generalized, as it’s a specific type of chatbot that isn’t suitable for all business processes.

If you want to use a text-based conversational AI tool in your business processes, explore how ChatGPT and traditional chatbots differ and how to choose between them.

What is a chatbot?

Chatbots are applications that simulate text-based human conversations using technologies like natural language processing (NLP) and natural language understanding (NLU). AI chatbots combine NLP with machine learning to understand user intent, analyze data, and generate accurate responses.

They can chat with a user in different languages and provide instant and consistent responses without human intervention. AI chatbots learn from user interactions and adapt over time, improving their ability to comprehend complex queries and deliver personalized interactions. This flexibility makes them usable across a wide range of use cases and industries.

There are currently three types of chatbots:

Rule-based chatbots

Rule-based chatbots have no built-in intelligence or learning capabilities. Unlike generative chatbots, they can’t generate an original response without relying on predefined templates, and unlike AI chatbots, they can’t produce one based on existing parameters.

They receive an input and try to find the closest possible answer in their database.
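The closest-answer lookup described above can be sketched in a few lines. This is a minimal illustration, not how any particular product works: the `FAQ` entries, the `rule_based_reply` name, and the `cutoff` value are all assumptions made for the example, and the similarity measure is simply the one provided by Python's standard `difflib` module.

```python
import difflib

# Hypothetical FAQ "database": known questions mapped to scripted answers.
FAQ = {
    "what are your opening hours": "We are open 9am-5pm, Monday to Friday.",
    "how do i track my order": "Use the tracking link in your confirmation email.",
    "how can i reset my password": "Click 'Forgot password' on the login page.",
}


def rule_based_reply(user_input: str, cutoff: float = 0.6) -> str:
    """Return the scripted answer whose stored question is closest to the input."""
    normalized = user_input.lower().strip(" ?!.")
    # get_close_matches ranks the stored questions by difflib's similarity ratio
    # and drops any candidate scoring below the cutoff.
    matches = difflib.get_close_matches(normalized, list(FAQ), n=1, cutoff=cutoff)
    if not matches:
        return "Sorry, I don't have an answer for that."
    return FAQ[matches[0]]


print(rule_based_reply("How do I track my order?"))
```

Nothing here learns from the conversation: improving the bot means editing the table by hand.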

AI chatbots

AI chatbots leverage ML models to select the most appropriate response from a set of predefined templates and their training dataset. Because most AI chatbots are trained on a specific category of datasets, they likely won’t answer questions that are outside their domain.
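As a rough sketch of this idea, the example below picks a response template by comparing the user's message with a few training utterances and declines when nothing is similar enough. It is only a stand-in: a bag-of-words cosine similarity replaces a trained ML model, and the `TRAINING` data, the `ai_reply` name, and the `threshold` value are hypothetical.

```python
import math
from collections import Counter

# Hypothetical training data for a pet-store assistant:
# (example utterance, response template).
TRAINING = [
    ("how often should i feed my dog", "Here is our feeding guide for dogs."),
    ("how much exercise does a dog need", "Here is our exercise guide for dogs."),
    ("when should my puppy get vaccinated", "Here is our puppy vaccination guide."),
]


def bag_of_words(text: str) -> Counter:
    """Count lowercase words, ignoring question marks."""
    return Counter(text.lower().replace("?", "").split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def ai_reply(user_input: str, threshold: float = 0.5) -> str:
    """Select the template of the most similar training utterance, if similar enough."""
    query = bag_of_words(user_input)
    score, template = max((cosine(query, bag_of_words(q)), r) for q, r in TRAINING)
    if score < threshold:
        # Nothing in the training data resembles the question: out of domain.
        return "Sorry, that is outside what I was trained on."
    return template


print(ai_reply("How often should I feed my dog?"))
print(ai_reply("What is the capital of France?"))
```

The second call shares no words with the training utterances, so the sketch falls back to the out-of-domain message instead of guessing.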

Generative chatbots

Generative chatbots, which include ChatGPT, use a much wider range of data to answer almost any question in any category. A ChatGPT chatbot leverages advanced conversational AI technology to provide more intuitive and human-like communication. This might make them less specialized in any one topic, but they appeal to a larger audience.

How does a chatbot work?

Chatbots are programs designed to engage with humans through human-like interactions. They adhere to the following steps while doing this:

- Receiving user input: This is a text or voice-based message or command from the user.

- Processing input:

  - Tokenization: The input is split into individual tokens. For example, “How are you?” is tokenized into “How”, “are”, “you”, “?”.

  - Intent understanding: The chatbot tries to understand the user’s intent using natural language processing (NLP) and natural language understanding (NLU). It decides whether the query is a question, command, or sentiment.

  - Entity recognition: The entities or keywords in the input are identified. For example, in “Book a ticket to Paris”, “Paris” is an entity representing a destination.

- Determining the response: The chatbot generates an appropriate response based on its type. In the next sections, we will focus solely on generative chatbots. For more comprehensive information, refer to the article on chatbot types.

- Returning the response: The best-matched response is finally returned to the user.
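The steps above can be sketched as a tiny pipeline. This is a simplified illustration under stated assumptions: the keyword table `INTENT_KEYWORDS`, the destination list `KNOWN_DESTINATIONS`, and all function names are hypothetical, and real chatbots use trained NLP/NLU models rather than keyword lookups for intent and entity detection.

```python
import re

# Hypothetical intent keywords and a tiny list of known destinations.
INTENT_KEYWORDS = {"book": "book_ticket", "cancel": "cancel_ticket"}
KNOWN_DESTINATIONS = {"paris", "rome", "berlin"}


def tokenize(text: str) -> list[str]:
    """Split the input into word tokens and standalone punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def detect_intent(tokens: list[str]) -> str:
    """Return the intent of the first token that matches a known keyword."""
    for token in tokens:
        intent = INTENT_KEYWORDS.get(token.lower())
        if intent:
            return intent
    return "unknown"


def extract_entities(tokens: list[str]) -> dict[str, str]:
    """Pick out the first token that names a known destination."""
    for token in tokens:
        if token.lower() in KNOWN_DESTINATIONS:
            return {"destination": token}
    return {}


def respond(text: str) -> str:
    """Run the pipeline: tokenize, detect intent, extract entities, build a reply."""
    tokens = tokenize(text)
    intent = detect_intent(tokens)
    entities = extract_entities(tokens)
    if intent == "book_ticket" and "destination" in entities:
        return f"Booking a ticket to {entities['destination']}."
    return "Sorry, I didn't understand that."


print(tokenize("How are you?"))            # ['How', 'are', 'you', '?']
print(respond("Book a ticket to Paris"))   # Booking a ticket to Paris.
```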

What’s ChatGPT (Generative Pre-trained Transformer)?

ChatGPT is a chatbot interface built on OpenAI’s generative models. The underlying technology of ChatGPT includes the Transformer architecture, which allows it to process and generate human-like text. While OpenAI’s AI models can be tailored for specific applications, the ChatGPT interface offers user-friendly access for all audiences without requiring coding or API keys. Functioning as a large language model (LLM) chatbot, ChatGPT generates responses based on the data it has been trained on.

Check out ChatGPT’s use cases.

How does ChatGPT work?

ChatGPT is a large language model built on the third generation of the GPT (Generative Pre-trained Transformer) architecture and trained on hundreds of billions of words.

Here’s a high-level overview of GPT’s functionality:

- It can generate coherent text sequences.

- It’s pre-trained on large swaths of data to gain general language capabilities, and is then fine-tuned for specific tasks.

- It utilizes the Transformer architecture to process inputs. For example, for the query “What are some traditional dishes in Italy?”, this is the breakdown:

  - It tokenizes the words.

  - It assigns each token a numerical embedding and a positional encoding so that it remembers the word order.

  - It gives each word a weight so it can focus on different parts of the input differently (e.g., the word “some” will have less weight than “dishes”).

  - It uses layers of Transformer blocks to understand the context. It sees patterns like “traditional dishes in Italy” and infers you’re asking for suggestions on what to eat.

  - It generates a response based on the immediate context of what you’ve asked and its vast training data (e.g., it has learnt that “pizza” and “pasta” are foods associated with Italy).
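To make the embedding, positional encoding, and weighting steps more concrete, here is a toy sketch in plain Python. It is not how ChatGPT is implemented: `toy_embedding` is a made-up deterministic stand-in for learned embeddings, the query is split into whole words rather than subword tokens, and the attention step omits the learned query/key/value projections and multiple heads that real Transformers use. It only shows the mechanics of turning tokens into vectors and computing one set of attention weights.

```python
import math


def positional_encoding(position: int, dim: int) -> list[float]:
    """Sinusoidal positional encoding for one token position."""
    return [
        math.sin(position / 10000 ** (i / dim)) if i % 2 == 0
        else math.cos(position / 10000 ** ((i - 1) / dim))
        for i in range(dim)
    ]


def toy_embedding(token: str, dim: int) -> list[float]:
    """Deterministic stand-in for a learned embedding (not real model values)."""
    seed = sum(ord(ch) for ch in token)
    return [math.sin(seed * (i + 1)) for i in range(dim)]


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(tokens: list[str], dim: int = 8) -> list[list[float]]:
    """For each token, compute how strongly it attends to every token."""
    vectors = [
        [e + p for e, p in zip(toy_embedding(tok, dim), positional_encoding(pos, dim))]
        for pos, tok in enumerate(tokens)
    ]
    weights = []
    for query in vectors:
        # Scaled dot product between this token's vector and every token's vector.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in vectors]
        weights.append(softmax(scores))
    return weights


tokens = ["What", "are", "some", "traditional", "dishes", "in", "Italy", "?"]
for token, row in zip(tokens, attention_weights(tokens)):
    print(token, [round(w, 2) for w in row])
```

Each printed row sums to 1 and shows how one token distributes its attention over the whole query. With these made-up embeddings the numbers carry no linguistic meaning; in a trained model, the weights are what let content words influence the output more than filler words.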

What are the differences between traditional chatbots and ChatGPT?

AI-based and generative chatbots like ChatGPT are conversational agents that automate user interactions. However, there are differences among them.

Architecture and design

- AI chatbots: Leverage ML models to create responses based on the specific data they’re trained with.

- ChatGPT: An advanced language model, built on the Transformer, that generates new responses based on patterns learnt from vast amounts of data.

Flexibility

- AI chatbots are moderately flexible. They can create different variations of the same answer, but can’t expand beyond their training data.

- ChatGPT can generate responses to many questions since it doesn’t rely on predefined templates.

Training

- AI chatbots are trained on specialized datasets tailored to specific applications or domains. They may require fine-tuning or additional data, and they will likely not answer questions outside their domain. The depth they offer is determined by their training data and ML algorithms.

  - For instance, a chatbot trained on data about dogs could answer dog-related questions. However, if you asked it to name a mammal other than a dog, it would likely not respond because dogs are the only mammals it knows.

- ChatGPT is trained on more diverse datasets than other AI chatbots, which enables it to cover a wide range of topics and generalize from that data. This capability is arguably its greatest appeal to users. ChatGPT offers greater depth than typical AI chatbots and can connect various topics effectively.

Figure 1: ChatGPT connecting laptops to books.

Multimodality

- AI chatbots may have advanced text-based capabilities, but they generally lack multimodality and are restricted to unimodal interactions.

- ChatGPT’s multimodal capabilities allow it to process and generate responses from both text and images. This enables versatile applications such as caption writing, code generation, and drafting alt text.

Personalization

- AI chatbots can make personalized suggestions within their domain. For example, if a chatbot is trained on music data, it can provide tailored recommendations about various music genres.

- ChatGPT’s personalization is extensive. For instance, if you mention that you enjoy noir films and ask it to recommend noir-style songs, it can create a bridge between the two.

Figure 2: ChatGPT making cross-references between different categories.

Reasoning

Reasoning models can be categorized by complexity and ability to handle context and abstraction.

- o0 reasoning: No reasoning; responses are purely reactive and static.

  - Chatbots: Rule-based chatbots operate at this level, responding to predefined keywords.

  - ChatGPT: Does not rely on o0 reasoning; instead, it uses dynamic inference to understand context.

- o1 reasoning: Direct, linear reasoning involving single-step logic.

  - Chatbots: Limited AI chatbots use this reasoning to address simple queries.

  - ChatGPT: Uses o1 reasoning but extends beyond it.

- o2 reasoning: Limited multi-condition reasoning with slightly expanded context.

  - Chatbots: Some advanced chatbots may use o2 reasoning for tasks like responding to “If my order is delayed, can I request a refund?”

  - ChatGPT: Easily handles o2 reasoning and resolves multi-condition queries, such as analyzing workflow dependencies or user-specific scenarios.

- o3 reasoning: Multi-step or layered reasoning that connects information across conditions.

  - Chatbots: Rarely operate at this level due to context retention and logic limitations.

  - ChatGPT: Operates in o3 reasoning, connecting relationships and synthesizing multi-step logic.

- o4 reasoning: Reasoning across multiple dimensions or synthesizing diverse inputs.

  - Chatbots: Most chatbots cannot perform this level of reasoning, as they cannot integrate diverse knowledge or manage ambiguity.

  - ChatGPT: Handles o4 reasoning to manage complex, multi-domain tasks. For example, responding to “Compare renewable energy policies in the U.S. and Germany and explain their impact on global carbon emissions.”

- o5+ reasoning: Meta-reasoning, where systems evaluate their reasoning process or explore alternative solutions.

  - Chatbots: No rule-based or basic AI chatbots can operate at this level, as it requires self-reflection and adaptive learning.

  - ChatGPT: Can approximate o5 reasoning by assessing its confidence in responses or asking users for clarification.

How do you pick between a traditional AI chatbot and a generative chatbot?

| Best For | Traditional Chatbots | Generative AI Chatbots |
|---|---|---|
| Simple, repetitive tasks | ✅ | |
| Creative, human-like conversations | | ✅ |
| Structured, rule-based interactions | ✅ | |
| Budget-friendly and easy to maintain | ✅ | |
| Context-aware, dynamic responses | | ✅ |
| Advanced infrastructure and customization | | ✅ |

You should pick a traditional AI chatbot if you:

- Need to handle repetitive tasks and predictable user queries, like FAQs, appointment scheduling, or order tracking.

- Prefer consistent, scripted responses over dynamic or creative replies to ensure compliance, particularly when missing subtle context or nuance has little impact and scripted interactions suffice.

- Have a restricted budget or resources and seek a simple, cost-effective solution to implement and maintain.

- Have an infrastructure that cannot handle complex AI models, requiring a lightweight chatbot that integrates effortlessly with your existing systems.

- Desire total control over each user interaction to minimize unpredictability and lessen the necessity for continuous model supervision or fine-tuning.

You should pick a generative chatbot if you:

- Need your chatbot to provide unique and dynamic responses, tailored to each query.

- Have a use case that would benefit from creative, human-like responses, instead of something structured and predictable.

- Have the infrastructure to maintain and integrate a complex generative AI model.

- Can handle the higher costs associated with using advanced generative AI models.

- Are capable of collecting user feedback and fine-tuning the responses the model generates.

Creating your own chatbot allows for customization and personalization, ensuring it meets your unique business needs. This approach enhances customer service and sales automation, and it helps ensure the accuracy and relevance of the responses these systems provide.

How to create your own GPT-powered chatbot?

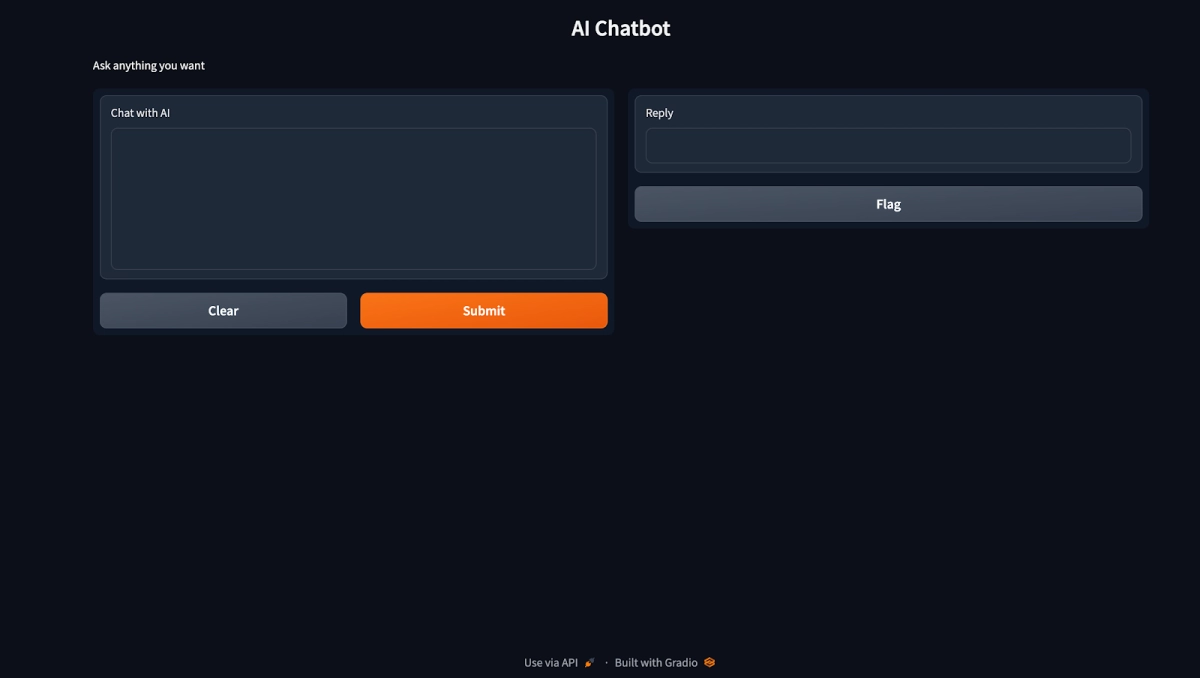

If you’re not ready to buy a chatbot, you can create your own GPT-powered chatbot using OpenAI’s API on Windows, macOS, or Linux. You will need Python, the OpenAI library, and Gradio to develop and deploy it.

Each new OpenAI account has $5 in credit. When that credit runs out or expires, you’ll need to pay for more.

Here’s how:

- Download and install Python.

- Check the Python version by running `python --version` on Windows or `python3 --version` on macOS and Linux.

- Upgrade pip, Python’s package installer. Run `python -m pip install -U pip` on Windows or `python3 -m pip install -U pip` on macOS and Linux.

- Install the OpenAI library. Run `pip install openai` on Windows or `pip3 install openai` on macOS and Linux.

- Install Gradio by running `pip install gradio`. This will set up your chatbot’s interface.

- Download Sublime Text.

- Create an OpenAI account. Go to “View API Keys,” click “Create a Secret Key,” and copy the key.

- Open Sublime Text, enter the following code, and replace “Your API key” with the key you copied.

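```python
# Written for openai>=1.0 and a recent Gradio release; older versions used
# openai.ChatCompletion.create and gr.inputs / gr.outputs instead.
import gradio as gr
from openai import OpenAI

# Replace the placeholder with the secret key you copied from your OpenAI account.
client = OpenAI(api_key="Your API key")

# Conversation history; the system message sets the assistant's behavior.
messages = [
    {"role": "system", "content": "You are a helpful and kind AI Assistant."},
]


def chatbot(user_input):
    """Send the user's message along with the history and return the reply."""
    if not user_input:
        return ""
    messages.append({"role": "user", "content": user_input})
    chat = client.chat.completions.create(model="gpt-3.5-turbo", messages=messages)
    reply = chat.choices[0].message.content
    messages.append({"role": "assistant", "content": reply})
    return reply


# Wrap the chatbot function in a simple text-in, text-out web interface.
gr.Interface(
    fn=chatbot,
    inputs=gr.Textbox(lines=7, label="Chat with AI"),
    outputs=gr.Textbox(label="Reply"),
    title="AI Chatbot",
    description="Ask anything you want",
).launch(share=True)
```

- Save the document as a new file on your Desktop, with a file name that ends in .py.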

- Go to the saved file. On Windows, right-click it and select “Copy as path.” On macOS and Linux, copy the file’s path.

- In the terminal, type `python` (on Windows) or `python3` (on macOS or Linux), press space, and paste the path you copied in the last step.

- Copy the URL shown after “Running on local URL” and paste it into your browser.

- You now have your own chatbot, powered by OpenAI’s GPT model and served through Gradio’s user interface.

Figure 3: Gradio’s chatbot UI, which we created.
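The script above appends every exchange to `messages`, so each request grows as the conversation gets longer. One possible way to keep requests bounded is to trim the history before each API call. The helper below is a minimal sketch under that assumption: `trim_history` and `max_turns` are hypothetical names, not part of the OpenAI or Gradio libraries.

```python
def trim_history(messages: list[dict], max_turns: int = 10) -> list[dict]:
    """Keep all system messages plus the most recent `max_turns` other messages."""
    system = [m for m in messages if m["role"] == "system"]
    dialogue = [m for m in messages if m["role"] != "system"]
    # Guard against max_turns <= 0, because dialogue[-0:] would return everything.
    recent = dialogue[-max_turns:] if max_turns > 0 else []
    return system + recent


history = [
    {"role": "system", "content": "You are a helpful and kind AI Assistant."},
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "Hello! How can I help?"},
    {"role": "user", "content": "What is Gradio?"},
]
print(trim_history(history, max_turns=2))
```

In the chatbot function, you could pass `trim_history(messages)` instead of `messages` when calling the API. The trade-off is that the model no longer sees the oldest turns of the conversation.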

FAQs

What is the main difference between ChatGPT and traditional chatbots?

The key difference lies in their underlying technology. Traditional chatbots operate on predefined rules and scripted responses, which can limit flexibility during customer interactions. In contrast, ChatGPT uses large language models and natural language processing to generate human-like text and understand context. This allows it to provide more dynamic and natural interactions compared to rule-based chatbots.

Why do AI-powered chatbots like ChatGPT enhance customer experience?

AI chatbots like ChatGPT enhance customer experience through contextual understanding and the ability to generate human-like responses. They adapt to user input and can carry on fluid, nuanced conversations that feel more natural than conventional chatbots. This leads to better customer engagement and satisfaction, especially when handling complex queries. Businesses benefit from a more intuitive interface that aligns with evolving customer preferences.

Can ChatGPT handle complex queries better than rule-based chatbots?

Yes, ChatGPT excels at handling complex queries thanks to its conversational AI capabilities and advanced language understanding. Unlike traditional chatbots, which follow scripted paths, ChatGPT can understand subtle user input and respond with more personalized, human-like text. It can also access a broader knowledge base to provide deeper insights. This makes it highly effective for customer support and resolving nuanced issues.

How do chatbots and ChatGPT support business efficiency?

Both traditional and AI chatbots help streamline business operations by automating repetitive tasks like answering FAQs or scheduling appointments. However, ChatGPT-powered systems go further by offering consistent, context-aware responses that improve the quality of customer interactions. This reduces the workload on human agents and supports 24/7 service. As a result, companies can boost operational efficiency and enhance customer satisfaction simultaneously.
