Chatbot success is elusive, and claims such as 10 times better ROI compared to email marketing make sense only if the chatbot is implemented successfully. This article explains how a combination of pre-launch tests (automated and manual) and post-launch A/B testing, customized for chatbots, can help companies build successful chatbots. We also cover other popular testing techniques, such as ad-hoc and speed testing.

What are the tests to complete before launching a chatbot?

Good developers build automated tests for the expected input/output combinations of their code. Similarly, a chatbot’s natural language understanding capabilities and typical responses need to be tested by the developer. These automated tests ensure that new versions of the chatbot do not introduce new errors.

The three types of tests below (general, domain-specific and limit tests) need to be completed and ideally automated before releasing the chatbot. They test the key points of chatbots and enable a company to pinpoint problems before launching its chatbot. After launch, they need to be repeated automatically to ensure that each new version does not break existing functionality.

General testing

This includes question and answer testing for broad questions that even the simplest chatbot is expected to answer. For example, greeting and welcoming the user are tested.

If the chatbot fails the general test, the other testing steps do not make sense. Chatbots are expected to keep the conversation flowing. If they fail at the first stage, the user will likely leave the conversation, hurting key chatbot metrics such as conversation rate and bounce rate.
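A general test can be as simple as a table of expected input/output pairs that runs on every build. The sketch below is only an illustration: `get_reply` is a hypothetical stand-in for the chatbot under test, and the sample messages and replies are our own assumptions.

```python
# Hypothetical stand-in for the chatbot under test; replace with a call to your bot.
def get_reply(message: str) -> str:
    text = message.strip().lower()
    if text in {"hi", "hello", "hey", "good morning"}:
        return "Hello! How can I help you today?"
    if text in {"bye", "goodbye"}:
        return "Goodbye! Have a great day."
    return "Sorry, I did not understand that."

# (user message, substring expected somewhere in the reply)
GENERAL_CASES = [
    ("Hi", "hello"),
    ("good morning", "hello"),
    ("Bye", "goodbye"),
]

def run_general_tests(cases):
    """Return the failing cases; an empty list means every case passed."""
    failures = []
    for message, expected in cases:
        reply = get_reply(message)
        if expected not in reply.lower():
            failures.append((message, reply))
    return failures

general_failures = run_general_tests(GENERAL_CASES)
print("general test failures:", general_failures)
```

Matching on a substring rather than the full reply keeps the test stable when the wording of a greeting is tweaked.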

Domain-specific testing

The second stage is testing for the specific product or service group. The language and expressions related to the product drive the test and ensure that the chatbot is able to answer domain-specific queries. In the case of an e-commerce retailer, these could be queries related to types of shoes, for example. An e-commerce chatbot would need to understand that these all lead to the same intent: “cage lady sandal shoe,” “strappy lady sandal shoe,” or “gladiator lady sandal shoe.”

Since it is impossible to capture every type of question related to a domain, domain-specific tests need to be categorized to ensure that key categories are covered by automated tests.

These context-related questions are the ones that drive the consumer to buy the product or service. Once the greeting part of the conversation is over, the rest of the conversation will be about the service or the product. Therefore, after the initial contact, chatbots need to ace this part and attain the highest possible correct answer ratio, another key chatbot metric.
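One way to organize domain-specific tests is to group labelled queries by category and report the correct answer ratio per category. The following is a minimal sketch under assumptions: `classify_intent` is a hypothetical keyword-based stand-in for the bot, and the category and intent names are invented for illustration.

```python
from collections import defaultdict

# Hypothetical stand-in for the bot's intent classifier.
def classify_intent(message: str) -> str:
    text = message.lower()
    if "sandal" in text:
        return "browse_sandals"
    if "refund" in text or "return" in text:
        return "return_item"
    return "unknown"

# (category, user message, expected intent); all labels are illustrative.
DOMAIN_CASES = [
    ("product_search", "cage lady sandal shoe", "browse_sandals"),
    ("product_search", "strappy lady sandal shoe", "browse_sandals"),
    ("product_search", "gladiator lady sandal shoe", "browse_sandals"),
    ("returns", "I want a refund", "return_item"),
    ("returns", "how do I send these back", "return_item"),
]

def correct_answer_ratio(cases):
    """Return {category: share of cases whose predicted intent matched}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, message, expected in cases:
        totals[category] += 1
        if classify_intent(message) == expected:
            correct[category] += 1
    return {category: correct[category] / totals[category] for category in totals}

ratios = correct_answer_ratio(DOMAIN_CASES)
print(ratios)
```

In this toy data the returns category scores lower because "how do I send these back" contains none of the stand-in's keywords, which is exactly the kind of gap a per-category report is meant to surface.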

Limit testing

The third stage is testing the limits of the chatbot. For the first two steps, we assumed ordinary expressions and meaningful sentences. This last step shows what happens when the user sends irrelevant input and how the chatbot handles it. That way, it is easier to see what happens when the chatbot fails.
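A limit test can feed the bot deliberately meaningless input and check that it neither crashes nor pretends to understand. This is a sketch only: `get_reply`, the `FALLBACK` message and the list of inputs are hypothetical choices of ours.

```python
FALLBACK = "Sorry, I did not understand that. Could you rephrase?"

# Hypothetical stand-in for the chatbot under test.
def get_reply(message: str) -> str:
    text = message.strip().lower()
    if text in {"hi", "hello"}:
        return "Hello! How can I help you today?"
    return FALLBACK

LIMIT_INPUTS = [
    "",               # empty message
    "   ",            # whitespace only
    "asdfghjkl",      # keyboard mashing
    "🙂🙂🙂",           # emoji only
    "a" * 10_000,     # very long message
    "12345 !!! ???",  # digits and punctuation
]

def run_limit_tests(inputs):
    """Return inputs that raised an error or did not get the fallback reply."""
    failures = []
    for message in inputs:
        try:
            reply = get_reply(message)
        except Exception as exc:  # any crash counts as a failure
            failures.append((message[:20], f"raised {type(exc).__name__}"))
            continue
        if reply != FALLBACK:
            failures.append((message[:20], reply))
    return failures

limit_failures = run_limit_tests(LIMIT_INPUTS)
print("limit test failures:", limit_failures)
```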

Manual testing

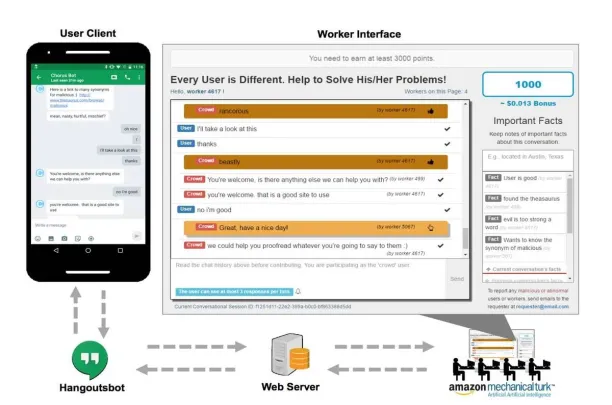

Manual tests do not necessarily mean the team spending hours on testing. Amazon’s Mechanical Turk operates a marketplace for work that requires human intelligence. The Mechanical Turk web service enables companies to programmatically access this marketplace and a diverse, on-demand workforce. Developers can leverage this service to build human intelligence directly into their applications. It can be used for further testing and to reach a higher level of confidence in the results.

What are the things to pay attention to in pre-launch testing?

Important aspects include:

Understanding Intent

Chatbots need to understand what users want, and the intent classifier is one of the main modules of a chatbot.

Building a flexible intent understanding system requires leveraging machine learning. Machine learning solutions predict intent mostly correctly for the input set and have the capacity to predict intent for cases they have never encountered before. Given the imperfect nature of this solution, developers should focus on the biggest misunderstanding issues and accept errors in edge cases. Of course, as in any machine learning problem, intent prediction can be improved with a larger volume of ground truth data (i.e. manually labelled examples).
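To find the biggest misunderstanding issues, it helps to count which intents get confused with which. The sketch below assumes a small set of manually labelled examples and uses a hypothetical `toy_predict` function in place of a real machine learning classifier; all intent names are illustrative.

```python
from collections import Counter

def evaluate_intents(examples, predict):
    """examples: list of (message, true_intent); predict: message -> intent.

    Returns overall accuracy and the (true, predicted) confusions, most frequent first.
    """
    confusions = Counter()
    correct = 0
    for message, true_intent in examples:
        predicted = predict(message)
        if predicted == true_intent:
            correct += 1
        else:
            confusions[(true_intent, predicted)] += 1
    accuracy = correct / len(examples) if examples else 0.0
    return accuracy, confusions.most_common()

# Hypothetical keyword-based stand-in for a trained intent classifier.
def toy_predict(message: str) -> str:
    text = message.lower()
    if "order" in text:
        return "track_order"
    if "refund" in text:
        return "request_refund"
    return "unknown"

# Manually labelled ground truth (illustrative).
LABELLED = [
    ("Where is my order?", "track_order"),
    ("I want a refund", "request_refund"),
    ("Refund my order", "request_refund"),
    ("money back please", "request_refund"),
]

accuracy, confusions = evaluate_intents(LABELLED, toy_predict)
print(accuracy, confusions)
```

Sorting confusions by frequency gives developers a short list of issues to fix first, while rare edge cases can be left for later.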

Conversation flow

The bot should be flexible enough to jump to different points in the task, such as changing the delivery address after the user has already entered it. It should also avoid unnecessary steps and make use of UX elements such as radio buttons, which can enable users to communicate more effectively than with text alone.
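A flow test can check that revisiting an earlier step does not restart the task. The example below is a toy sketch: `CheckoutFlow` and its step names are assumptions made for illustration, not part of any particular chatbot framework.

```python
class CheckoutFlow:
    """Toy slot-filling flow; the step names are illustrative assumptions."""

    STEPS = ("address", "payment", "confirmation")

    def __init__(self):
        self.slots = {}

    def fill(self, step, value):
        """Set or overwrite a step's value without touching the other steps."""
        if step not in self.STEPS:
            raise ValueError(f"unknown step: {step}")
        self.slots[step] = value

    def next_step(self):
        """Return the first step that is still missing, or 'done'."""
        for step in self.STEPS:
            if step not in self.slots:
                return step
        return "done"

def test_change_address_mid_flow():
    flow = CheckoutFlow()
    flow.fill("address", "1 Old Street")
    flow.fill("payment", "card")
    # The user changes their mind about the address after entering payment.
    flow.fill("address", "2 New Avenue")
    assert flow.slots["address"] == "2 New Avenue"
    assert flow.slots["payment"] == "card"         # earlier answers are kept
    assert flow.next_step() == "confirmation"      # no unnecessary repeated step

test_change_address_mid_flow()
```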

Error handling

There will inevitably be cases where the bot does not understand the input. The bot’s responses need to be tested so that it communicates its lack of understanding clearly and helps the user proceed (e.g. by contacting a live agent).
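One testable error-handling rule is to offer a live agent after a set number of consecutive misunderstandings. The sketch below assumes a hypothetical `FallbackPolicy` class and a threshold of two misses; both are illustrative choices rather than a recommended standard.

```python
class FallbackPolicy:
    """Decide what the bot should do after each user turn."""

    def __init__(self, max_misses: int = 2):
        self.max_misses = max_misses
        self.misses = 0  # consecutive turns the bot failed to understand

    def on_turn(self, understood: bool) -> str:
        if understood:
            self.misses = 0
            return "continue"
        self.misses += 1
        if self.misses >= self.max_misses:
            return "offer_live_agent"
        return "ask_to_rephrase"

policy = FallbackPolicy(max_misses=2)
actions = [policy.on_turn(u) for u in (False, False, True, False)]
print(actions)
```

Resetting the counter after an understood message avoids escalating users who only stumbled once.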

What are post-launch chatbot testing techniques?

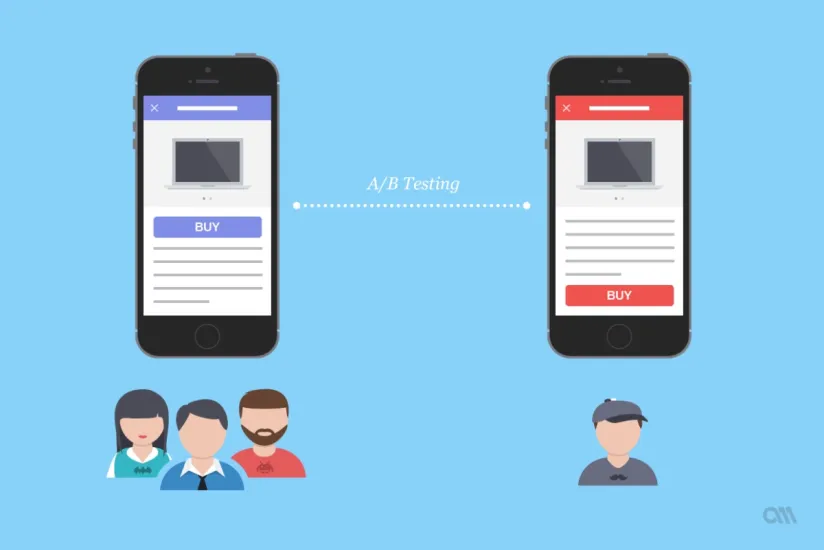

A/B testing is the most flexible way to test various aspects of chatbot functionality once a chatbot has active users.

What is A/B testing?

A/B testing is defined as comparing two versions of a product to see which one performs better. You compare the two versions by showing them to similar visitors at the same time.

Even though it has been used for ages in other fields of marketing, there are currently not many A/B testing alternatives available for chatbots.

Basically, it is a way to experiment with distinct characteristics of a chatbot: through randomized trials, companies can collect data and decide which alternative to use. The testing is conducted through an automated process. This makes it possible to speed up chatbot development and provides further quality assurance.
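For a randomized trial, each user has to land in one variant and stay there across sessions. A common approach, shown here as a sketch, is to hash the user ID together with an experiment name; this is pseudo-random rather than truly random assignment, and the function name, experiment name and user IDs below are hypothetical.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to a variant for a given experiment."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Split 1,000 hypothetical users for a greeting experiment.
counts = {"A": 0, "B": 0}
for i in range(1000):
    counts[assign_variant(f"user-{i}", "greeting_style")] += 1
print(counts)
```

Including the experiment name in the hash means the same user can fall into different groups in different experiments, which helps keep tests independent of each other.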

We can dissect the process into two separate test steps of the chatbot design. One is deciding on the visual factors of the chatbot such as the design, color or the location of the chatbot on the web page.

The other is deciding on the conversational factors, such as the quality and performance of the algorithm. Both sets of factors need to be tested for a better user experience.

Conversational Factors

We have previously written an article regarding the key metrics for chatbots. In A/B testing, the key metrics to follow will likely remain the same. Retention rates and drop-offs will still be the significant factors in deciding the success of the chatbot, and user engagement rates for the different chatbot alternatives will still matter.

One such test is about deciding how to start the conversation: should the chatbot start with a standard salutation, or use distinctive messages, such as messages that include emoji? This is a key factor since the customer funnel flows through the first engagement.

If the chatbot is successful in eliciting the desired action, which is making the user interact with the chatbot, we are more likely to reach a larger audience and more conversations.

After the initial contact, different alternatives for messaging can be created. This might be achieved through using customer data. Data sources such as the user’s search history or location can be a fantastic way to achieve that customization. Different conversational messages can be created, but the order of these messages can dramatically affect the hazard rate of the customer, i.e. how likely the customer is to drop off at each step.

After the first few messages, most chatbots can detect the user’s characteristics and keep the conversation flowing. But artificial intelligence can experience problems if the initial contact never occurred.

One company in this space is Botanalytics, which provides chatbot analytics tools. Its platform can provide insights and reports such as users’ activity (as a graph and number), conversation activity (as a graph and number), average conversation steps per user, average conversations per user, most common keywords, most active hours, and average session length. Botanalytics provides correlation analysis to bot owners. It is also possible to drill into the details of test groups and cohorts.

The second test is deciding on the formality of the language: does using formal language increase engagement or not? Depending on the customer or user profile, this is of crucial importance, so multiple responses need to be tested. The level of formality will also shape the input provided by the user, which can make the conversation more difficult for the artificial intelligence algorithm to process.

The tradeoff is whether the language of the bot brings a greater return on engagement or not. Since we have the instinct to buy from or engage with people who share our characteristics, bot responses will affect this dramatically. The only channel through which the user experiences the chatbot is its language. Therefore, return on engagement will be the key metric for this type of A/B testing.

Visual Factors

The design of the chatbot is also important, although this is the less technical side of chatbots. Still, it is a crucial factor for the success of the user experience. It can be tested by changing, for example, the frame color or button color. Basically, this is the part where firms would use traditional A/B testing procedures.

The effects of visual and conversational factors need to be studied further. Currently, there is no data regarding which factor has more influence. Separating the two and deciding which to focus on is still important. But right now, taken as a whole, a well-structured and engaging UX in messenger chatbots may boost retention rates up to 70%.

For chatbots, companies can still use the methods mentioned in the survey analytics article. The right experimental design will make it possible to construct the right counterfactual and provide objective results. The most basic steps for implementing a chatbot A/B test are as follows:

7 Steps to chatbot A/B testing

- Choose the platform to conduct the A/B testing

- Analyze the chatbot funnel. Create a list of visual factors to test.

- Do the same for conversational factors, such as different algorithms and different structures

- Decide on the test method to use, control for interactions between the factors to be tested, and gather as much data as possible

- Compare and analyze the alternatives and, if necessary, test for additional factors

- Keep testing: treat it as a dynamic process to achieve higher performance

- Improve your chatbot and enjoy!
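For the compare-and-analyze step, one common option is a two-proportion z-test on a rate such as user engagement. This is our choice for illustration rather than something the steps above prescribe, and the counts in the example are made up.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Return (z, two-sided p-value) for the difference in rates between B and A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Made-up example: 120 of 1,000 users engaged with greeting A, 150 of 1,000 with greeting B.
z, p_value = two_proportion_z_test(120, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be due to chance alone, but the test assumes independent users and reasonably large samples, so it is best read alongside the raw rates.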

What are other chatbot testing approaches?

Testing the chatbot’s performance in speed and security

No one likes to wait, and one of the major advantages of chatbots is being able to respond immediately. Testing is required to ensure speedy responses.

Security is critical in any application. The infinite possibilities enabled by free text can complicate testing a bot’s security features; however, these are crucial tests for operational bots.
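A simple speed test times a batch of replies and checks a high percentile against a budget. The sketch below is illustrative: `get_reply` is a hypothetical stand-in that answers instantly, and the one-second budget is an assumption rather than a recommended target.

```python
import math
import time

def measure_latencies(get_reply, messages):
    """Return the response time in seconds for each message."""
    latencies = []
    for message in messages:
        start = time.perf_counter()
        get_reply(message)
        latencies.append(time.perf_counter() - start)
    return latencies

def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

# Hypothetical stand-in for the chatbot under test.
def get_reply(message: str) -> str:
    return "ok"

latencies = measure_latencies(get_reply, ["hi"] * 50)
p95 = percentile(latencies, 95)
print(f"p95 latency: {p95:.6f} s")
assert p95 < 1.0, "95% of replies should arrive within the assumed 1-second budget"
```

Checking a percentile instead of the average keeps a few very slow replies from being hidden by many fast ones.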

Ad-hoc testing

Many of the key metrics and key tests mentioned in this article are broad test categories. It is possible to test further and generate ad-hoc categories and methods, but it is important to note that chatbots are bots.

No matter how hard people try, at the current stage, chatbots have limits. So, expecting human-like performance from a chatbot is like expecting god-like performance from a human. It happens every once in a while, but it doesn’t happen overnight or all the time.

Agile development is still the key to success. Even after the chatbot is released, the process continues. Feedback is the most essential element to shape the chatbot. Real life performance should be monitored closely to keep the chatbot versatile and robust.

For more on chatbot testing

If you are interested in learning more about chatbots, read:

- Top Chatbot Testing Frameworks & Techniques

- The Ultimate Guide Into 20+ Metrics for Chatbot Analytics

Finally, if you believe your business would benefit from a chatbot platform, we have a data-driven list of vendors.
