Compare Top 20+ AI Governance Tools: A Vendor Benchmark in '24

According to AI stats, 90% of commercial apps are expected to leverage AI by the end of 2025. Despite the increasing role of AI, business leaders are concerned due to:

- The bias in data and algorithms leading to erroneous outcomes in 85% of AI projects

- Authorities like the EU planning to introduce AI regulations. Fines under comparable regulations such as the GDPR can reach up to 4% of a firm’s worldwide annual revenue in case of infringement. 1

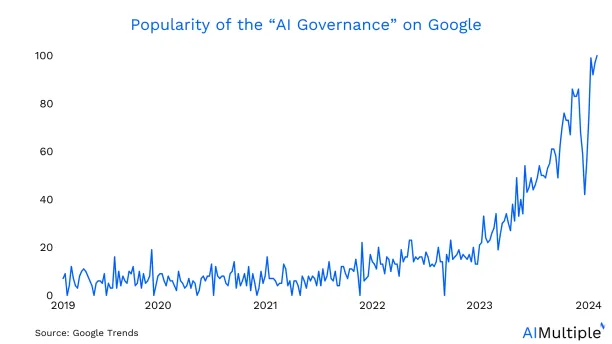

One recent answer to this problem is the adoption of AI governance tools (See Google Trends Graph). These tools can help deliver ethical and responsible AI. However, choosing an AI governance tool can be challenging for business leaders and data scientists since:

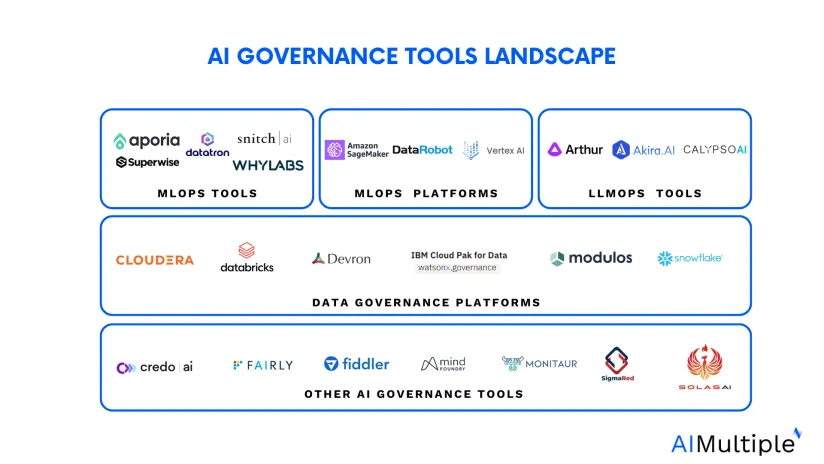

- The AI governance software landscape is complex

- AI governance solutions are provided in MLOps, LLMOps tools and data platforms.

Therefore, this article provides a comprehensive view of AI governance software, enabling data-driven decision-making.

Compare AI governance software

We provide a list of AI governance solutions within a framework to highlight each tool’s focus area. Depending on an organization’s AI initiatives and governance needs, they can select tools from these categories to build a robust AI governance strategy that aligns with their goals and responsibilities.

Other AI governance tools

These tools tend to focus on an aspect of AI governance, unlike platforms that manage the entire AI lifecycle. Such tools can be useful for small-scale projects or best-of-breed approaches. For example, they can focus on ensuring that AI systems comply with industry regulations and security standards. They help organizations mitigate AI risk by:

- Implementing security measures

- Staying in line with regulatory requirements and laws

- Managing model documentation.

Some of these tools include:

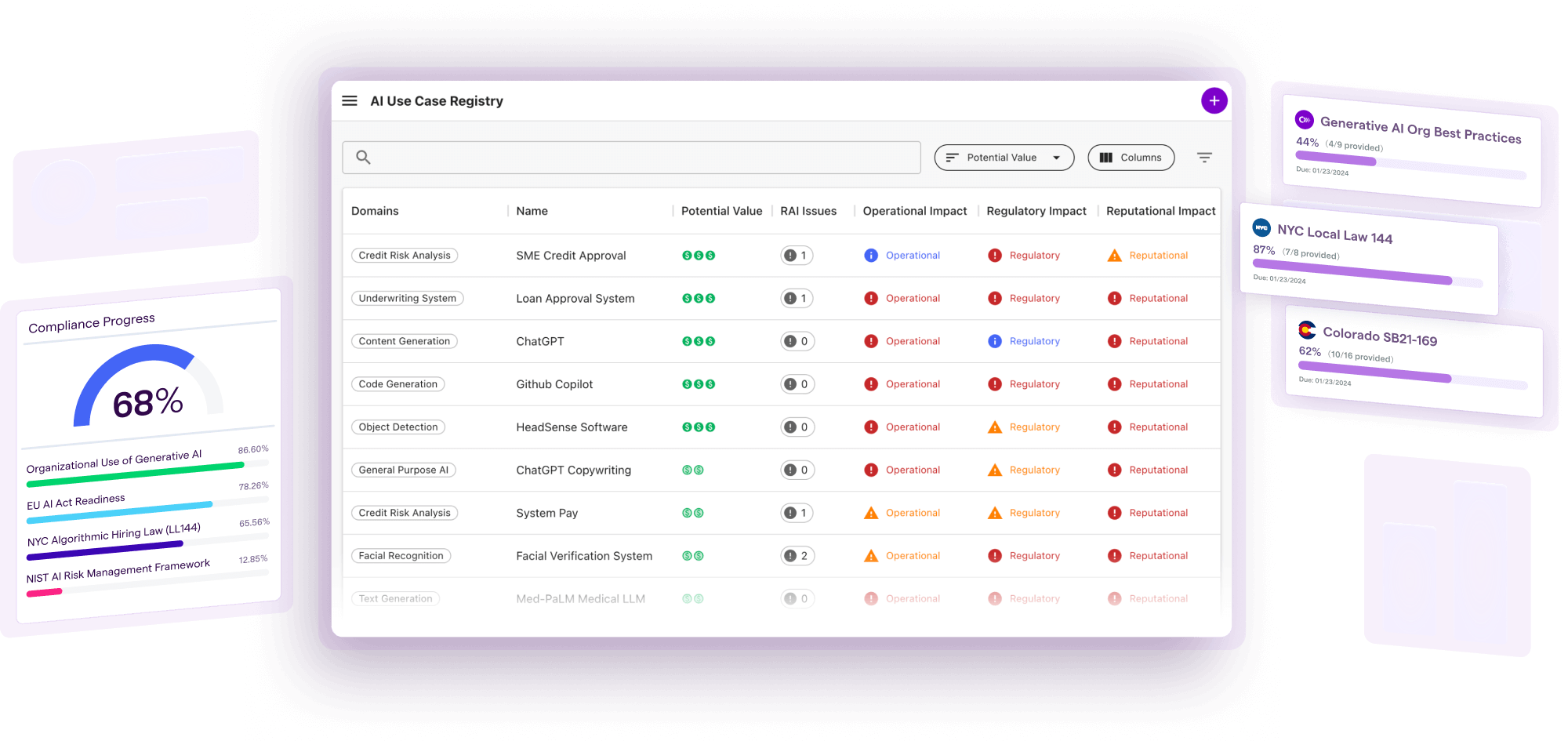

1.) Credo AI: Delivers AI model risk management, model governance and compliance assessments with an emphasis on generative AI to facilitate the adoption of AI technology.

2.) Fiddler AI: An AI observability tool that provides ML model monitoring and relevant LLMOps and MLOps features to build and deploy trustworthy AI, including generative AI.

3.) Fairly AI: Continuously monitors, governs and audits models to reduce risk and improve compliance.

4.) Mind Foundry: Monitors and validates AI models, maintains transparency in decision-making, and aligns AI behavior with ethical and regulatory standards, fostering responsible AI governance.

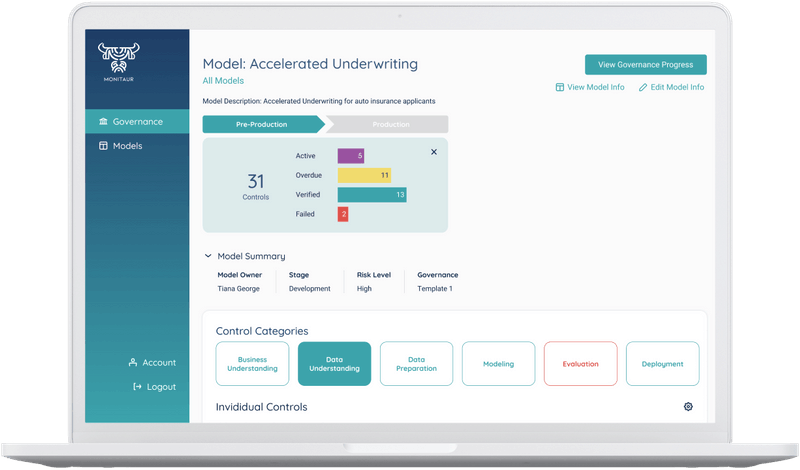

5.) Monitaur: Focuses on model monitoring for AI governance.

6.) Sigma Red AI: Detects and mitigates biases, ensuring model explainability and facilitating ethical AI practices.

7.) Solas AI: Checks for algorithmic discrimination to increase regulator and legal compliance.

Data Governance platforms

Data governance platforms contain various tools and toolkits primarily focused on data management to ensure the quality, privacy and compliance of data used in AI applications. They contribute to maintaining data integrity, security, and ethical use, which are crucial for responsible AI practices.

Some of these platforms can help check compliance and overall AI lifecycle management. These platforms can be valuable for organizations implementing comprehensive AI governance frameworks. Here are a few examples:

1.) Cloudera: A hybrid data platform that aims to improve the quality of data sets and ML models, focusing on data governance.

2.) Databricks: Combines data lakes and data warehouses in a platform that can also govern structured and unstructured data, machine learning models, notebooks, dashboards and files on any cloud or platform.

3.) Devron AI: Offers a data science platform to build and train AI models and ensure that models meet governance policies and compliance requirements, including GDPR, CCPA and EU AI Act.

4.) IBM Cloud Pak for Data: IBM’s comprehensive data and AI platform, offering end-to-end governance capabilities for AI projects.

6.) Snowflake: Delivers a data cloud platform that can manage risk and improve operational efficiency through data management and security.

MLOps platforms

MLOps (Machine learning operations) platforms include a wide range of tools and infrastructure to manage the entire machine learning lifecycle and assist model governance.

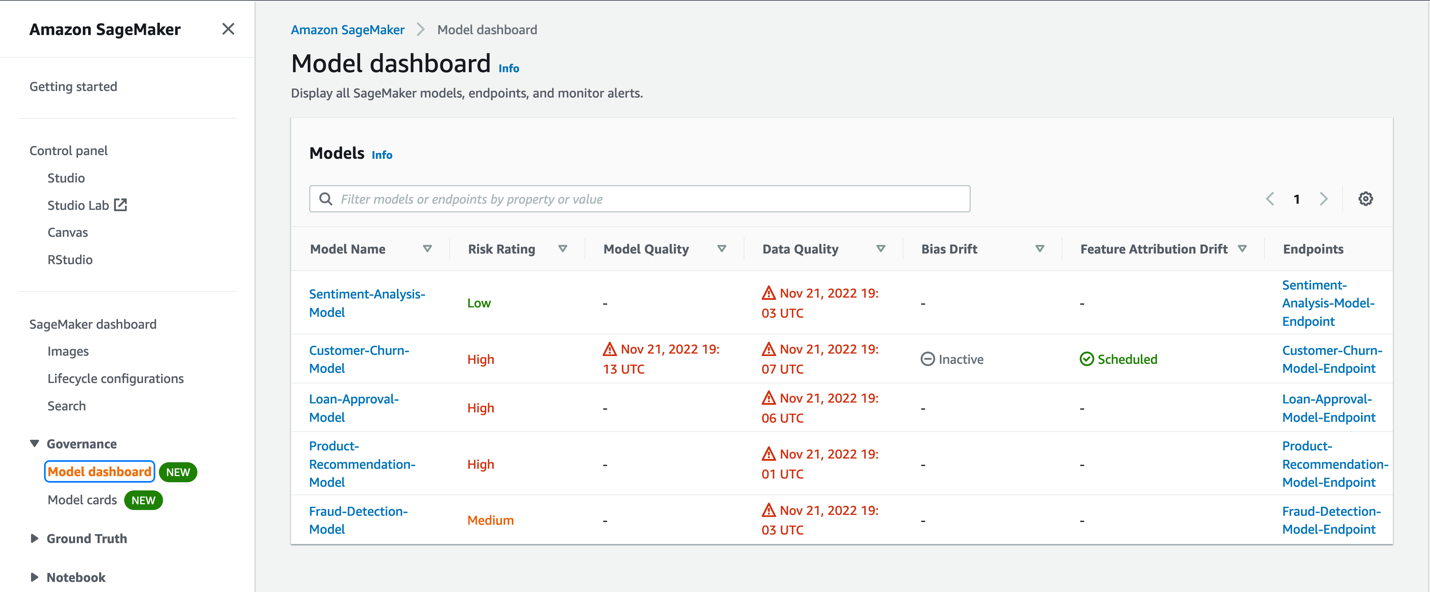

1.) Amazon SageMaker: A fully managed machine learning service provided by Amazon Web Services (AWS). It simplifies the process of building, training, and deploying machine learning models while supporting AI governance practices.

2.) DataRobot: Delivers a single platform to deploy, monitor, manage, and govern all your models in production, including features like trusted AI and ML governance to provide end-to-end AI lifecycle governance.

3.) Vertex AI: Offers a range of tools and services for building, training, and deploying machine learning models with AI governance techniques, such as model monitoring, fairness, and explainability features.

Compare more MLOps platforms in our data-driven and comprehensive vendor list.

MLOps tools

MLOps tools are individual software tools that serve specific purposes within the entire machine learning process. For example, MLOps tools can focus on ML model development, monitoring or model deployment. A data science team can deliver responsible AI products by applying these tools to machine learning algorithms to:

- Monitor and detect biases

- Check for availability and transparency

- Ensure ethical compliance and data privacy.

Some of these tools include:

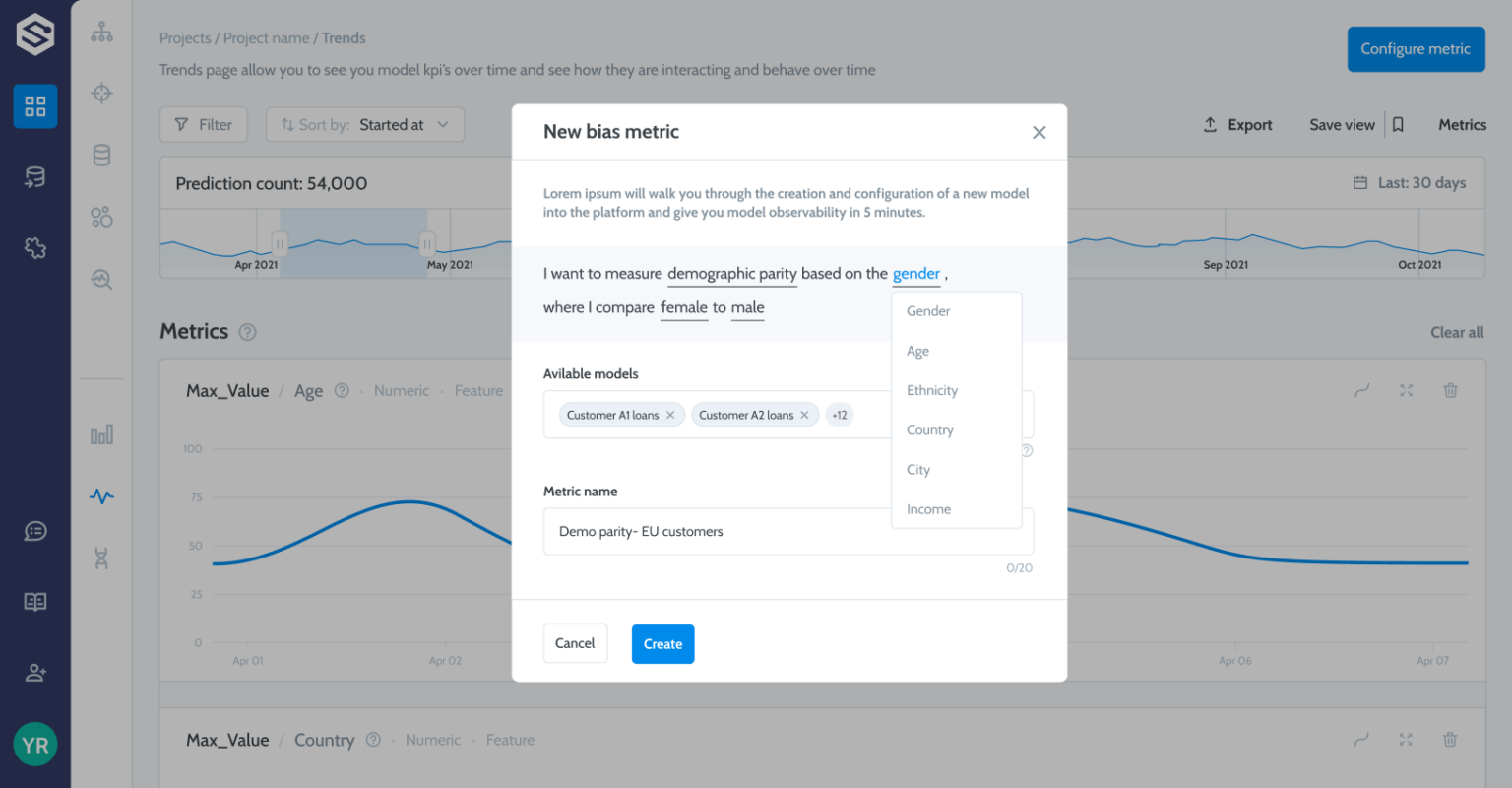

1.) Aporia AI: Specializes in ML observability and monitoring to help teams maintain the reliability and fairness of their machine learning models in production. It employs model performance tracking, bias detection, and data quality assurance.

2.) Datatron: Provides visibility into model performance, enables real-time monitoring, and ensures compliance with ethical and regulatory standards, thus promoting responsible and accountable AI practices.

3.) Snitch AI: An ML observability and model validation tool that can track model performance, troubleshoot issues and continuously monitor models.

4.) Superwise AI: Monitors AI models in real time, detects biases, and explains model decisions, thereby promoting transparency, fairness, and accountability in AI systems.

5.) WhyLabs: An LLMOps tool that monitors LLM data and models to identify issues.

LLMOps tools

LLMOps tools include LLM monitoring solutions and tools that assist some aspects of LLM operations. These tools can deploy AI governance practices in LLMs by monitoring multiple models and detecting biases and unethical behavior in the model. Some of them include:

1.) Akira AI: Runs quality assurance to detect unethical behavior, bias or lack of robustness.

2.) Calypso AI: Delivers monitoring with a focus on control, security and governance over generative AI models.

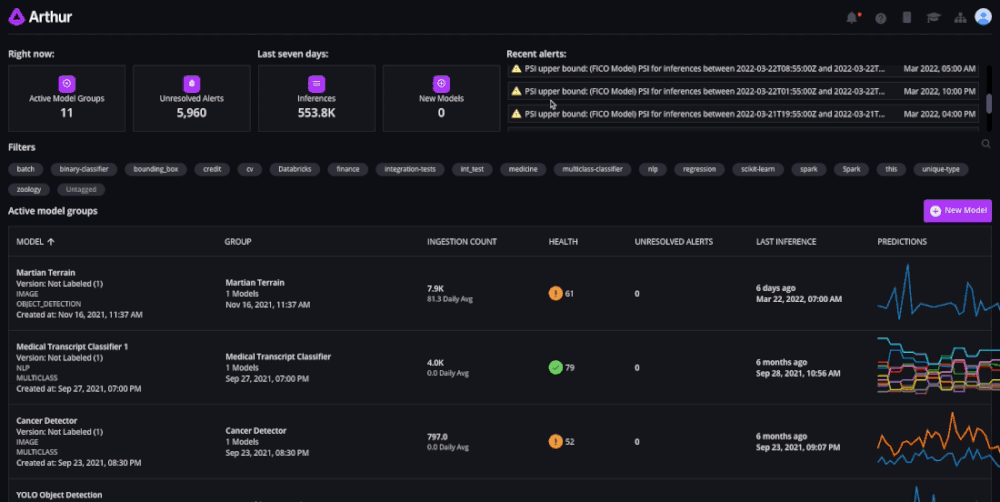

3.) Arthur AI: Tests LLMs, computer vision and NLP (natural language processing) models against established metrics.

Compare more LLMOps tools in our data-driven and comprehensive vendor list.

Disclaimers

This is an emerging domain, and most of these tools are embedded in platforms offering other services like MLOps. Therefore, AIMultiple has not had a chance to examine these tools in detail and relied on public vendor statements in this categorization. AIMultiple will improve our categorization as the market matures.

Products are sorted alphabetically on this page since AIMultiple doesn’t currently have access to more relevant metrics to rank these companies.

The vendor lists are not comprehensive.

What is AI governance?

AI governance refers to establishing rules, policies, and frameworks that guide the development, deployment, and use of artificial intelligence technologies. It aims to ensure ethical behavior, transparency, accountability, and societal benefit while mitigating potential risks and biases associated with AI systems.

Why do we need AI governance?

AI governance serves multiple essential purposes:

Ethical and Responsible AI: Ensures AI systems are designed, trained, and used ethically, preventing biased or harmful outcomes.

Transparency and Accountability: Promotes transparency in AI algorithms and decisions, making developers and organizations accountable for AI actions.

Data Privacy and Compliance: Helps organizations comply with data privacy regulations like GDPR and HIPAA, ensuring that data is collected and used legally and ethically.

Risk Mitigation: Identifies and mitigates various risks associated with AI, including legal, financial, and reputational risks, before they lead to negative consequences.

Fairness and Equity: Detects and rectifies bias in AI models, ensuring fair treatment of all individuals and groups.

Model Performance and Reliability: Continuously monitors AI models to maintain reliability, reducing errors and improving user satisfaction.

Public Trust: Builds public trust in AI technologies by emphasizing ethical behavior and transparency.

Alignment with Organizational Values: Allows organizations to align AI practices with their mission and values, demonstrating a commitment to ethics and responsibility.

Competitive Advantage: Ethical AI and responsible governance can provide a competitive edge by attracting customers, partners, and investors who value ethical AI solutions.

Key AI governance techniques & features

AI governance software employs common techniques to streamline building and deploying AI/ML models, such as:

Explainability and interpretability: AI governance software employs visualizations and explanations for AI model outputs to provide insights into how AI models make decisions. These tools allow users to understand and predict complex model behavior.
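One widely used model-agnostic explanation technique is permutation importance: shuffle one input feature at a time and measure how much the model's accuracy drops. The following is a minimal sketch using only the Python standard library. The names `permutation_importance` and `toy_model` and the sample data are hypothetical illustrations, not the API of any tool listed in this article.

```python
import random

def accuracy(predict, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    correct = sum(1 for row, y in zip(rows, labels) if predict(row) == y)
    return correct / len(labels)

def permutation_importance(predict, rows, labels, n_features, seed=0):
    """Accuracy drop observed when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(predict, rows, labels)
    importances = []
    for j in range(n_features):
        # Shuffle only column j, leaving every other feature untouched.
        column = [row[j] for row in rows]
        rng.shuffle(column)
        shuffled = [list(row[:j]) + [v] + list(row[j + 1:])
                    for row, v in zip(rows, column)]
        importances.append(baseline - accuracy(predict, shuffled, labels))
    return importances

# Hypothetical model that looks only at feature 0 and ignores feature 1.
def toy_model(row):
    return int(row[0] > 0.5)

rows = [[0.1, 5.0], [0.9, 1.0], [0.2, 3.0], [0.8, 4.0], [0.3, 2.0], [0.7, 6.0]]
labels = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, labels, n_features=2)
```

Because `toy_model` never reads feature 1, shuffling that column cannot change its predictions, so its importance is zero. A larger drop for a feature suggests the model relies on it more heavily.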

Transparency and accountability: AI governance provides clear documentation of model training data and processes, which enables monitoring of model decisions for accountability.

Fairness and bias detection: AI governance practices focus on identifying and quantifying biases in AI models and data. For example, AI governance tools can monitor model performance across different demographic groups, allowing teams to mitigate biases in real time or during training. Two main ways to address bias in the model are to ensure compliance with ethics and with the law:

Ethical AI compliance: AI governance primarily aligns AI behavior with ethics by implementing guidelines and constraints. As a result, a data scientist can customize AI behavior to avoid harmful and offensive outputs of AI systems.

Regulatory compliance: A major AI governance practice is to ensure adherence to legal and regulatory requirements, meet data privacy and security standards and help business users comply with industry-specific regulations.
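The per-group monitoring described under fairness and bias detection can be sketched as follows. This is an illustrative example, assuming binary predictions and a single group attribute. The function names and sample data are hypothetical, and the demographic parity difference shown here is only one of several possible fairness metrics.

```python
from collections import defaultdict

def group_rates(groups, y_true, y_pred):
    """Per-group accuracy and selection rate (share of positive predictions)."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "positive": 0})
    for g, t, p in zip(groups, y_true, y_pred):
        s = stats[g]
        s["n"] += 1
        s["correct"] += int(t == p)
        s["positive"] += int(p == 1)
    return {
        g: {"accuracy": s["correct"] / s["n"],
            "selection_rate": s["positive"] / s["n"]}
        for g, s in stats.items()
    }

def demographic_parity_difference(rates):
    """Gap between the highest and lowest selection rate across groups."""
    selection = [r["selection_rate"] for r in rates.values()]
    return max(selection) - min(selection)

# Hypothetical data: two groups with four predictions each.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]

rates = group_rates(groups, y_true, y_pred)
gap = demographic_parity_difference(rates)
```

In this sample both groups have the same accuracy, yet group "a" receives positive predictions three times as often as group "b". This is the kind of gap that an accuracy-only dashboard would miss.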

Model lifecycle management: Once a model is ready, AI governance techniques can manage the deployment of the model in the production environment by monitoring models for drift, degradation, or unexpected behavior. Two features that can facilitate AI deployment include:

Model validation and testing: Some AI governance tools include model validation features that test and verify models against benchmark datasets. Running these checks before production helps detect potential issues.

Model risk management: AI governance techniques provide insights to assess and mitigate risks for AI systems.
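A pre-production validation check of the kind described above can be as simple as a gate that compares accuracy on a benchmark dataset with a minimum threshold. The sketch below is an assumption-laden illustration: `validate_model`, `ValidationReport`, the 0.9 threshold and the toy models are hypothetical and do not represent any vendor's implementation.

```python
from dataclasses import dataclass

@dataclass
class ValidationReport:
    accuracy: float
    passed: bool

def validate_model(predict, benchmark, min_accuracy=0.9):
    """Score a model on (features, expected_label) pairs and apply a pass/fail gate."""
    if not benchmark:
        raise ValueError("benchmark dataset must not be empty")
    correct = sum(1 for features, expected in benchmark
                  if predict(features) == expected)
    acc = correct / len(benchmark)
    return ValidationReport(accuracy=acc, passed=acc >= min_accuracy)

# Hypothetical benchmark dataset and two candidate models.
benchmark = [((0.2,), 0), ((0.8,), 1), ((0.6,), 1), ((0.4,), 0)]

def threshold_model(features):
    return int(features[0] > 0.5)

def always_positive_model(features):
    return 1

good_report = validate_model(threshold_model, benchmark)
bad_report = validate_model(always_positive_model, benchmark)
```

Here `good_report.passed` is true while `bad_report.passed` is false, so only the first model would be cleared for deployment. In practice the threshold and the metric would be set by the organization's governance policy.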

Continual monitoring and auditing: Another common practice is tracking model performance and behavior in production to ensure compliance and reliability in AI systems.
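One common way to monitor a production model for drift is the Population Stability Index (PSI), which compares the distribution of a feature or score in production with a reference sample. The following is a minimal sketch. The function name, the bin count, and the 0.2 alert threshold (a frequently cited rule of thumb) are assumptions rather than settings from any tool in this article.

```python
import math

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a production sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all reference values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the reference range
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical samples: production values have shifted toward the upper half.
reference = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, production)
drift_alert = psi_shifted > 0.2  # assumed rule-of-thumb threshold
```

Identical distributions give a PSI of zero, and the value grows as the production data moves away from the reference. A scheduled job could compute this per feature and log the result for later audits.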

How to select the right AI governance software?

1. Identify your objective and scale: Consider the scale of your AI initiatives and the types of AI models and applications you are developing.

2. Research and evaluate available tools in the market:

– Look for vendors that specialize in the areas most relevant to your needs.

– Create a shortlist of promising tools based on their features, capabilities, and user reviews.

3. Benchmark the shortlisted tools based on the following:

– Each tool’s features: Assess its ability to detect bias, ensure data privacy, provide transparency, and monitor compliance.

– Ease of integration: Assess how well the AI governance tool integrates with your existing AI development and deployment pipeline.

– Compatibility with your organization: Check for compatibility with the programming languages, frameworks, and platforms you use for AI development. Ensure the tool can work seamlessly with your data sources, storage solutions, and cloud providers.

– User-friendly interface: How intuitive the tool is for seamless interaction.

– Customization and flexibility: The extent to which the tool can be customized to match your requirements, allowing you to adjust settings and configurations.

– Scalability: Consider the tool’s scalability to accommodate your organization’s growth in AI initiatives, such as increasing data volumes and workloads as your projects grow.

– Quality of vendor support: Investigate the level of customer support, response time and assistance provided.

– Training and resources: Review how comprehensive the documentation, tutorials, user guides, online sources and training materials are. Adequate resources help your team learn how to use the tool effectively.

– Cost and budget: Evaluate the cost structure of the AI governance tool, including licensing fees, subscription costs, and implementation expenses. Calculate the long-term costs and benefits of the tool to ensure it provides value over time based on your financial resources.

– Data security and privacy: Check compliance with data protection regulations, including encryption and access controls. Ensure the security and confidentiality of sensitive information.

4. Seek free trial and proof of concept (if applicable): Conduct a trial or proof of concept (PoC) with the selected AI governance software. You may use real or simulated AI projects to assess how well the tool addresses your governance needs. Involve key stakeholders, data scientists, and AI developers in the PoC to gather feedback on usability and effectiveness.
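The benchmarking in step 3 can be made concrete with a weighted scoring matrix: rate each shortlisted tool per criterion, weight the criteria by importance, and rank by the weighted average. This is only a sketch. The tool names, criteria, ratings (on an assumed 1 to 5 scale) and weights below are hypothetical placeholders.

```python
def weighted_score(scores, weights):
    """Weighted average of one tool's criterion ratings."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[c] * w for c, w in weights.items()) / total_weight

def rank_tools(tool_scores, weights):
    """Return (tool, score) pairs sorted from best to worst."""
    ranked = [(name, weighted_score(s, weights)) for name, s in tool_scores.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)

# Hypothetical weights and 1-5 ratings for two placeholder tools.
weights = {"features": 3, "integration": 2, "cost": 1}
tool_scores = {
    "Tool A": {"features": 4, "integration": 3, "cost": 2},
    "Tool B": {"features": 3, "integration": 5, "cost": 4},
}

ranking = rank_tools(tool_scores, weights)
```

With these placeholder numbers, "Tool B" ranks first even though "Tool A" scores higher on features, because integration and cost carry enough weight to offset it. Adjusting the weights is a quick way to test how sensitive the ranking is to your priorities.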

Further reading

Explore more on AIOps, MLOps, ITOps and LLMOps by checking out our comprehensive articles:

- Comparing 10+ LLMOps Tools: A Comprehensive Vendor Benchmark

- What is LLMOps, Why It Matters & 7 Best Practices in 2023

- Understanding ITOps in ’23: Benefits, use cases & best practices

- What is AIOPS, Top 3 Use Cases & Best Tools?

- MLOps Tools & Platforms Landscape: In-Depth Guide for 2023

Check out our data-driven vendor lists for more LLMOps tools and MLOps platforms.


External sources

- 1. “What are the GDPR Fines?” GDPR.EU. Revisited September 6, 2023.

- 2. “AI governance search.” Google Trends. Revisited September 7, 2023.

- 3. “Model compliance dashboard.” Credo AI. Revisited September 7, 2023.

- 4. “Governance Dashboard.” Monitaur. Revisited September 7, 2023.

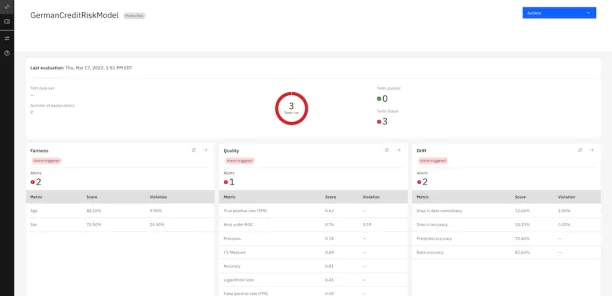

- 5. D’Angelo, S.; Sturdevant, M. (July 19, 2021) “Getting started with IBM openscale.” IBM. Revisited September 7, 2023.

- 6. “Sagemaker model dashboard.” Amazon. Revisited September 7, 2023.

- 7. “Models management dashboard.” Aporia AI. Revisited September 7, 2023.

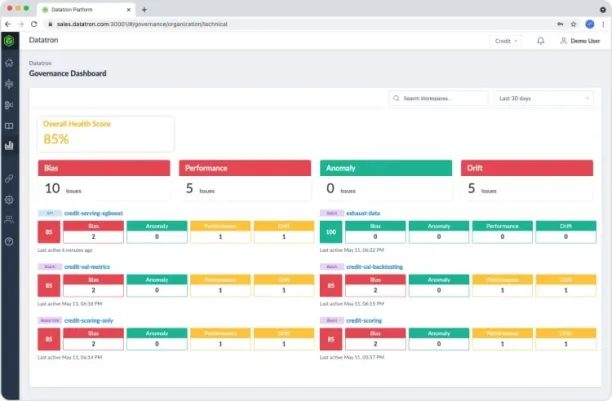

- 8. “Governance Dashboard.” Datatron. Revisited September 7, 2023.

- 9. “Platform dashboard for bias detection.” Superwise AI. Revisited September 7, 2023.

- 10. “Product dashboard.” Arthur AI. Revisited September 7, 2023.
