Top 10 Innovative Data Center Automation Tools in 2024

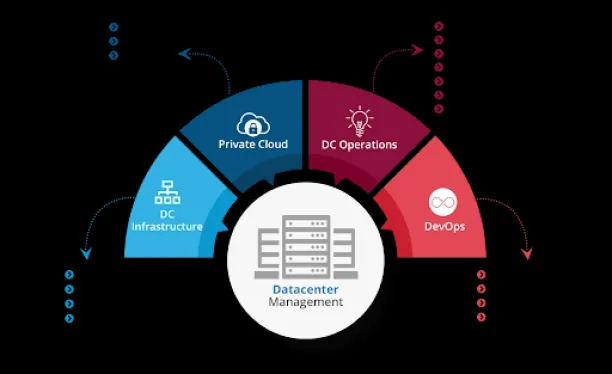

According to Gartner, ~80% of traditional data centers will be closed by 2025, and ~50% of the remaining ones will increase their efficiency by ~30% with automation. Currently, more than 20 data center automation tools can help automate data center processes (see Figure 1).

Picking the right data center automation tool is critical for firms that rely on high-quality data and data-driven decisions. In this article, we aim to inform IT professionals about the top 10 data center automation tools and their distinct features to support them in choosing a tool.

Comparison of Top 10 Data Center Automation Tools

| Vendor | Rating* |
|---|---|
| ActiveBatch | 4.6/5 based on 330 reviews |
| Redwood RunMyJobs | 4.6/5 based on 197 reviews |
| Tidal Workload Automation | 4.6/5 based on 81 reviews |
| OpCon Workload Automation | 4.6/5 based on 78 reviews |
| Fortra’s JAMS | 4.5/5 based on 223 reviews |
| AWS Batch | 4.4/5 based on 118 reviews |
| AutoSys | 4.3/5 based on 45 reviews |
| Control-M | 4.2/5 based on 159 reviews |
| IBM Workload Automation | 4.0/5 based on 32 reviews |

* Sorted with sponsors at the top and the rest sorted according to average rating. Ratings are based on Capterra, Gartner, G2, Peerspot and TrustRadius.

1. ActiveBatch ensures cloud data center efficiency securely

One of the most important aspects of a well-functioning data center is timely data delivery to departments or clients. However, operations that span cloud and virtual machine data centers can raise security concerns, since integrating systems may give access to staff who are not entitled to it. ActiveBatch integrates on-premises, cloud, and hybrid data center environments in a single interface to securely support workload management.

For example, Vero Skatt, a Finnish tax agency, used ActiveBatch to connect six environments into a central interface. To address security concerns, they used ActiveBatch’s user permission feature to control user and group access to specific databases and applications. They improved adherence to internal and external audit requirements while significantly reducing manual scripting and troubleshooting.
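The idea of restricting access by user group can be illustrated with a minimal sketch. This is not ActiveBatch's API; the resource names, group names, and the `can_access` helper are all hypothetical and only show the general pattern of group-based permissions.

```python
# Hypothetical mapping of resources to the groups allowed to use them.
PERMISSIONS = {
    "tax_db": {"dba_team", "audit_team"},
    "payroll_app": {"hr_ops"},
}

# Hypothetical mapping of users to the groups they belong to.
USER_GROUPS = {
    "alice": {"dba_team"},
    "bob": {"hr_ops", "audit_team"},
}


def can_access(user: str, resource: str) -> bool:
    """Allow access only if the user is in a group granted on the resource."""
    allowed_groups = PERMISSIONS.get(resource, set())
    user_groups = USER_GROUPS.get(user, set())
    # A non-empty intersection means at least one shared group.
    return bool(user_groups & allowed_groups)


if __name__ == "__main__":
    print(can_access("alice", "tax_db"))       # alice is in dba_team
    print(can_access("alice", "payroll_app"))  # alice is not in hr_ops
```

Unknown users and unknown resources are denied by default, which is the safer choice when several environments share one interface.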

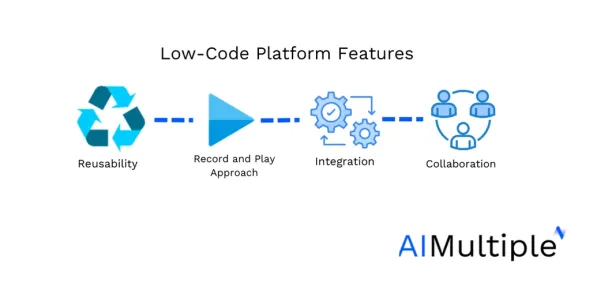

Vero Skatt’s operations benefited from ActiveBatch in other ways as well. Compared to traditional automation tools such as Windows Task Scheduler, ActiveBatch has a low-code interface with a drag-and-drop workflow designer and multiple workflow views (see Figure 2). This easy-to-use interface enabled their IT team to develop diverse and complex automation tasks that would have been difficult to accomplish with traditional tools.

Figure 2: Benefits of low-code platforms.

2. Redwood RunMyJobs provides enterprise-wide visibility

Redwood RunMyJobs can be used for business process automation, DevOps automation, data warehouse management, security management, and more. RunMyJobs is a solution that enables transparency and compliance across the enterprise by streamlining cross-departmental workflow processes.

The ALSO Group, previously known as ALSO-Actebis, is a major distributor of IT and telecommunications products in Europe, with revenue exceeding €10.9 billion in 2020. ALSO chose Redwood to speed up the processing of customer orders. By implementing Redwood RunMyJobs within just one week, ALSO integrated its warehouse application with its SAP operations, enabling it to handle incoming orders swiftly.

Through Redwood’s modular automation, ALSO was able to create automation processes once and reuse them repeatedly. This reduced their 46,000 SAP job definitions to only 570 Redwood scripts, including 140 job sets and 300 independent jobs. Previously, they required a team of six specialized SAP Basis administrators to manage these processes. With Redwood, they need only a single administrator, which frees the rest of the team for other important tasks.
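To illustrate why defining a process once and reusing it shrinks the number of job definitions, here is a minimal sketch of a parameterized job template. It does not use Redwood's scripting interface; the `JobTemplate` class, the `export_orders` command, and the warehouse names are hypothetical.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class JobTemplate:
    """One reusable job definition with placeholders for its parameters."""
    name: str
    command: Template

    def instantiate(self, **params: str) -> str:
        # substitute() raises KeyError if a placeholder is left unfilled,
        # so an incomplete job definition fails early.
        return self.command.substitute(**params)


# A single template (hypothetical command line) ...
export_orders = JobTemplate(
    name="export_orders",
    command=Template("export_orders --warehouse $warehouse --date $date"),
)

# ... replaces one hand-written definition per warehouse.
jobs = [
    export_orders.instantiate(warehouse=w, date="2024-01-31")
    for w in ("berlin", "zurich")
]

if __name__ == "__main__":
    for job in jobs:
        print(job)
```

Adding a new warehouse then means adding one parameter value rather than copying and editing another job definition.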

Redwood’s services are trusted and used by organizations like Arthex, Avaya, Epson, and AMD.

3. Stonebranch WLA tool provides a centralized platform for mass automation

Managing mass workloads in different environments can be challenging for traditional data center automation tools. Older data center tools may have less operational capacity than modern ones, and they may cause database integration issues. Modern workload automation (WLA) tools can process many business operations across multiple platforms, maximize savings, and increase performance and scalability.

For example, ITERGO, an IT service company for the ERGO insurance group, needed an alternative to its traditional WLA tools to reliably manage a large data center handling about 15 million transactions per day.

The Stonebranch WLA tool offered them a single unified platform, Universal Automation Center, which connected their Tivoli Workload Automation Scheduler, other schedulers, and their cloud-based databases with 38,000 users.

The platform provided the following features:

- A universal controller to manage all platforms in the data center.

- A universal agent to remotely execute processes on various software.

- A universal data mover to automate the data pipeline reliably and securely across servers.

- A universal data mover gateway for secure data transfer to third-party businesses from the data center.

4. Control-M provides an organized outlook to enterprises

Smooth business operations require good orchestration of the applications and data flows in your data center. As a company grows, maintaining this orchestration manually or with traditional tools can become difficult for the IT team.

As KoçSistem grew into one of Turkey’s largest technology companies, its infrastructure team began to struggle with too few employees and too many servers. They had difficulty initializing servers to meet high-quality compliance standards and keeping up with server patches, which left servers vulnerable.

Unlike traditional tools, the modern WLA tool Control-M provided patch and compliance management. Patch management supported their CI/CD pipeline by reducing the time spent on server DevOps operations such as downloading, analyzing, testing, and repairing patches from different vendors. Compliance management helped KoçSistem use its resources better and achieve a nearly 100% patch compliance ratio.

5. JAMS’ PowerShell automation and relational job diagram

Data center administration is critical for your servers to communicate data across your business. However, connecting data centers with PowerShell and other software can be time-intensive and overwhelming for your IT team.

The JAMS job scheduler is built on the .NET Framework and can assist your IT team with PowerShell scripts. For example, Jupiter, a fund management firm, devoted significant time to monitoring its processes, security, and compliance.

JAMS’ run-time encryption, compliance trail, and various other features allowed their workload to grow from 1,000 to approximately 36,000 FTP and ETL processes across hundreds of servers.

In addition, JAMS enables IT teams to quickly understand workflows between their servers, make quick edits, and increase their efficiency, unlike old-fashioned automation tools. JAMS’ Relational Job Diagram provides a clear, graphical representation of the jobs running on servers, including their relationships, triggers, dependencies, and much more.

6. IBM WLA tool ensures quality in workflows

Traditional automation tools can misjudge server and processing capacity, which leads to inaccurate estimates of task completion times. This can result in poor scheduling decisions that cause delays and inefficiencies in the data center’s workflow.

IBM’s WLA tool includes an AI-powered data advisor designed to reduce such inaccurate estimates. AI Data Advisor (AIDA) can detect anomalies in the overall workload or in specific jobs. This helps your IT team know task completion times accurately and report inaccuracies.

The AI can also apply machine learning and data analytics methods to large data sets to compare its estimates of task completion time across external and internal servers, guiding your IT team on the efficiency of your data center. Additionally, its user interface (UI) can offer valuable insights into data center efficiency, as it can record, track, and analyze historical data from jobs and workstations.
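As a rough illustration of what detecting an anomaly in a specific job can mean, the sketch below flags jobs whose latest runtime deviates strongly from their own history using a simple z-score. This is a generic statistical technique, not IBM's AIDA algorithm; the job names, runtimes, and the threshold of 3 standard deviations are assumptions.

```python
from statistics import mean, stdev


def find_runtime_anomalies(history, latest, threshold=3.0):
    """Return jobs whose latest runtime is more than `threshold`
    sample standard deviations away from their historical mean."""
    anomalies = []
    for job, runtimes in history.items():
        # Need a latest value and at least two past runs to compute stdev.
        if job not in latest or len(runtimes) < 2:
            continue
        mu = mean(runtimes)
        sigma = stdev(runtimes)
        if sigma == 0:
            # Perfectly stable history: any change counts as an anomaly.
            if latest[job] != mu:
                anomalies.append(job)
            continue
        if abs(latest[job] - mu) / sigma > threshold:
            anomalies.append(job)
    return anomalies


# Hypothetical runtimes in seconds.
history = {
    "nightly_etl": [300, 310, 295, 305, 298],
    "report_export": [60, 62, 59, 61, 60],
}
latest = {"nightly_etl": 302, "report_export": 140}

if __name__ == "__main__":
    print(find_runtime_anomalies(history, latest))  # ['report_export']
```

A production system would likely account for seasonality and workload size as well; the point here is only that historical job data makes such deviations measurable.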

7. OpCon reduces IT requests

Open Technology Solutions (OTS), a credit union service organization, was experiencing errors in their core servers with their old automation tool. The use of OpCon WLA tools in their servers nearly eliminated system downtime.

The OpCon Deploy feature enables IT teams to update their servers while business processes are in progress. With traditional methods, some servers would have to be shut down and business operations halted while the update is processed. The OpCon WLA tool can be an important part of your DevOps toolbox for efficient server management.

8. Tidal Workload Automation manages CPU failures

Due to inefficiencies in scheduling and CPU management in data centers, servers can occasionally fail during data processing and distribution, causing delays. Innovative WLA tools can detect server overloads and CPU failures and redirect tasks to cloud-based and virtual machine environments to ensure that your service level agreement (SLA) is met.

For example, an insurance plan company was having trouble processing spikes in day-to-day activity (about 25,000 to 100,000 jobs per day), and more errors were occurring as business and claim volume grew. Furthermore, the company had to submit the insurance plans to the government by 9:00 the next day to avoid financial penalties.
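The redirect-on-overload behavior can be sketched in a few lines. This is a simplified illustration, not Tidal's implementation; the `Server` class, the server names, and the 85% CPU load limit are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    cpu_load: float       # fraction of CPU in use, 0.0 to 1.0
    healthy: bool = True  # False if the server has failed


def route_job(job: str, primary: Server, fallbacks: list[Server],
              max_load: float = 0.85) -> str:
    """Run on the primary server unless it has failed or is overloaded;
    otherwise pick the least-loaded healthy fallback."""
    if primary.healthy and primary.cpu_load < max_load:
        return primary.name
    candidates = [s for s in fallbacks
                  if s.healthy and s.cpu_load < max_load]
    if not candidates:
        raise RuntimeError(f"No capacity available for job {job!r}")
    return min(candidates, key=lambda s: s.cpu_load).name


if __name__ == "__main__":
    on_prem = Server("on-prem-1", cpu_load=0.95)
    cloud = [Server("cloud-vm-1", 0.40), Server("cloud-vm-2", 0.20)]
    print(route_job("claims_batch", on_prem, cloud))  # cloud-vm-2
```

Raising an error when no server has capacity makes the SLA risk visible immediately instead of queuing the job silently.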

Tidal Workload Automation met the company’s requirements and allowed it to handle spikes ranging from 6,000 to 100,000 requests. Furthermore, since deploying Tidal in 2007, the company has not missed any SLAs.

9. AWS Batch accelerates speed to market

Arm Limited, a world-renowned provider of licensable computing technology for semiconductor corporations, has seen more than 200 billion chips based on its design manufactured and shipped by partners over the past 30 years as of February 2022. However, Arm’s on-site data centers couldn’t keep up with escalating engineering needs, leading the company to instigate drastic changes in 2016 to meet its forecasted growth over the following 5 to 10 years.

Transitioning from traditional data centers to Amazon Web Services (AWS) allowed Arm to construct scalable cloud-based solutions for operating EDA tasks. Arm has optimized its computing expenses, boosted engineering efficiency, hastened product launch times, and improved product quality through this approach. Moreover, Arm has leveraged AWS CPUs based on its architecture to design and verify new chips, further propelling its business success.

10. AutoSys boosts insurance processes and data processing

Hanwha Life Insurance Co Ltd is a global life insurance provider with its main headquarters located in South Korea. Recognizing the need to enhance IT efficiency and reduce the burden of IT management tasks, Hanwha Life sought to streamline job scheduling through increased automation. Hanwha Life deployed AutoSys Workload Automation.

The solution is currently used to schedule tasks across both mainframe and UNIX environments, specifically for the company’s accounting systems and data warehouse, managing approximately 8,000 jobs in total. Hanwha’s IT team uses intuitive online consoles, which allow them to categorize jobs by workload and server and to conveniently modify batch job configurations.

To discover other tools that you can replace or pair with data center automation tools, read our data-driven and comprehensive articles and benchmarks on:

- IT Automation: Top Tools, Examples, Rationale & Benefits

- Top 12 IT Automation Software: Vendor Benchmarking

- 5 Reasons for Data Warehouse Automation

- Top 10+ Data Warehouse Automation Software

Cem has been the principal analyst at AIMultiple since 2017. AIMultiple informs hundreds of thousands of businesses (as per similarWeb) including 60% of Fortune 500 every month.

Cem's work has been cited by leading global publications including Business Insider, Forbes, Washington Post, global firms like Deloitte, HPE, NGOs like World Economic Forum and supranational organizations like European Commission. You can see more reputable companies and media that referenced AIMultiple.

Throughout his career, Cem served as a tech consultant, tech buyer and tech entrepreneur. He advised businesses on their enterprise software, automation, cloud, AI / ML and other technology related decisions at McKinsey & Company and Altman Solon for more than a decade. He also published a McKinsey report on digitalization.

He led technology strategy and procurement of a telco while reporting to the CEO. He has also led commercial growth of deep tech company Hypatos that reached a 7 digit annual recurring revenue and a 9 digit valuation from 0 within 2 years. Cem's work in Hypatos was covered by leading technology publications like TechCrunch and Business Insider.

Cem regularly speaks at international technology conferences. He graduated from Bogazici University as a computer engineer and holds an MBA from Columbia Business School.
