We benchmarked the leading Walmart scraper APIs, analyzing 2 batches of requests to 200 URLs from product and search pages during Dec 2024 and Jan 2025, totaling 2,000 requests:

| Provider | Focus | |

|---|---|---|

1. |  Bright Data Bright Data |

Web scraping APIs at cost-effective prices with detailed results |

2. |  Oxylabs Oxylabs |

High success rate and response times |

3. |  Smartproxy Smartproxy |

Web scraping APIs with affordable entry prices |

4. |  Apify Apify |

Web scraping APIs with community-driven approach |

This guide provides step-by-step instructions on how to scrape Walmart’s product pages using Python and various other tools.

A quick comparison of top Walmart scraping tools

| Vendors | Starts from/mo | Free trial |

|---|---|---|

| Bright Data | $500 | 7 days |

| Oxylabs | $49 | 7 days |

| Smartproxy | $29 | 3K free requests |

| Nimble | $150 | 7 days |

| Apify | $30 | 3 days |

| ScraperAPI | $149 | 7 days |

| Zyte | $100 | $5 free for a month |

Walmart web data benchmark results

Check out the average number of fields returned by scraping APIs in Walmart web pages and median response times (for definitions, see methodology):

Cost comparison of top Walmart scraper APIs

Data fields extracted from Walmart via scraping APIs

Product pages

Check which data fields each provider supports:

| Data Field | Bright Data | Apify | Oxylabs | Smartproxy | Zyte |

|---|---|---|---|---|---|

| Product specifications | ✅ | ❌ | ✅ | ✅ | ❌ |

| Product reviews | Top negative and positive review | ❌ | ❌ | ❌ | ❌ |

| Review tags | ✅ | ❌ | ❌ | ❌ | ❌ |

Notes:

- ✅ indicates that the provider supports the data field. The provider does not support the indicated data field (❌). Let’s use an example to clarify ✅s and ❌s: For this product, Bright Data offers product specifications field including brand, or age range. Since Apify doesn’t offer this information, the above chart shows ❌ under Apify.

- “Top Reviews” are the most prominent reviews accessible.

- All benchmarked APIs provide the following data points:

- Product page: Title, URL, price, currency, image URL, review count, availability, breadcrumb, rating.

- Search page: Title, URL, brand, price, currency, image url, category name, product ID.

Search pages

| Data Field | Bright Data | Apify | Oxylabs | Smartproxy | Zyte |

|---|---|---|---|---|---|

| Product Ingredients | ✅ | ❌ | ❌ | ❌ | ❌ |

| Category URL | ✅ | ❌ | ❌ | ❌ | ❌ |

| Product Specifications | ✅ | ❌ | ❌ | ❌ | ❌ |

| Related pages | ✅ | ❌ | ❌ | ❌ | ❌ |

| Description | ✅ | Short description | ❌ | ❌ | ❌ |

| Review tags | ✅ | ❌ | ❌ | ❌ | ❌ |

| Reviews | Name, title, review text | ❌ | ❌ | ❌ | ❌ |

| Number of reviews | ✅ | ❌ | ✅ | ✅ | ❌ |

What is Walmart scraping, and why is it essential?

Walmart scraping is the process of collecting data from Walmart’s product or category web pages. You can extract information, such as price data, product titles, descriptions and images.

It is possible to scrape Walmart using a no-code eCommerce web scraper or in-house web scraper. You can create a Walmart scraper for data collection using any programming language, including Python web scraping libraries such as Requests and Beautiful Soup. Whether you’re using an off-the-shelf or in-house scraper, it’s crucial to follow web scraping ethics and stay within legal boundaries to avoid any potential legal issues.

Scraping eCommerce product pages can provide a wealth of valuable data that can assist businesses in the following ways:

- Develop a more effective pricing strategy

- Identify market gaps for growth

- Monitor changes in pricing and promotions in real -time.

How to scrape Walmart product data & fetch product page

- Identify the product or category page that contains the data you need.

- Determine what type of data you want to retrieve from Walmart, such as product prices, images or reviews.

- Inspect the page source of the product page (Figure 1).

Figure 1: Shows how to inspect the source code of the HTML content

- You can locate the HTML elements containing the data you need, such as product title or price, using the find() or find_all() methods.

- You can extract the text content of the element using the text attribute. For example, if you intend to scrape product title data from a Walmart product page, search for an h1 tag that contains the product title.

If you are using Beautiful Soup, you can send a GET request to the Walmart product page and use the find() method to search for the first h1 tag on the page containing the product name data. Then, you can extract the text content of the h1 tag using the get_text() method. - To scrape multiple Walmart product pages, you can use a loop to iterate over a list of URLs and scrape each page in turn. It is essential to bear in mind that you need to put time breaks between your requests to simulate human-like behavior. You can use the time. sleep() function to introduce time breaks between requests.

- If you are working with JSON data, you can load and parse it into Python objects using the JSON module. You can use the pd.json_normalize() function to flatten all JSON data into an actual data frame, making it easier to analyze and manipulate.

- You can use the built-in CSV module or third-party libraries such as Pandas or NumPy to export data to a CSV or other data formats, such as JSON or Excel.

Sponsored

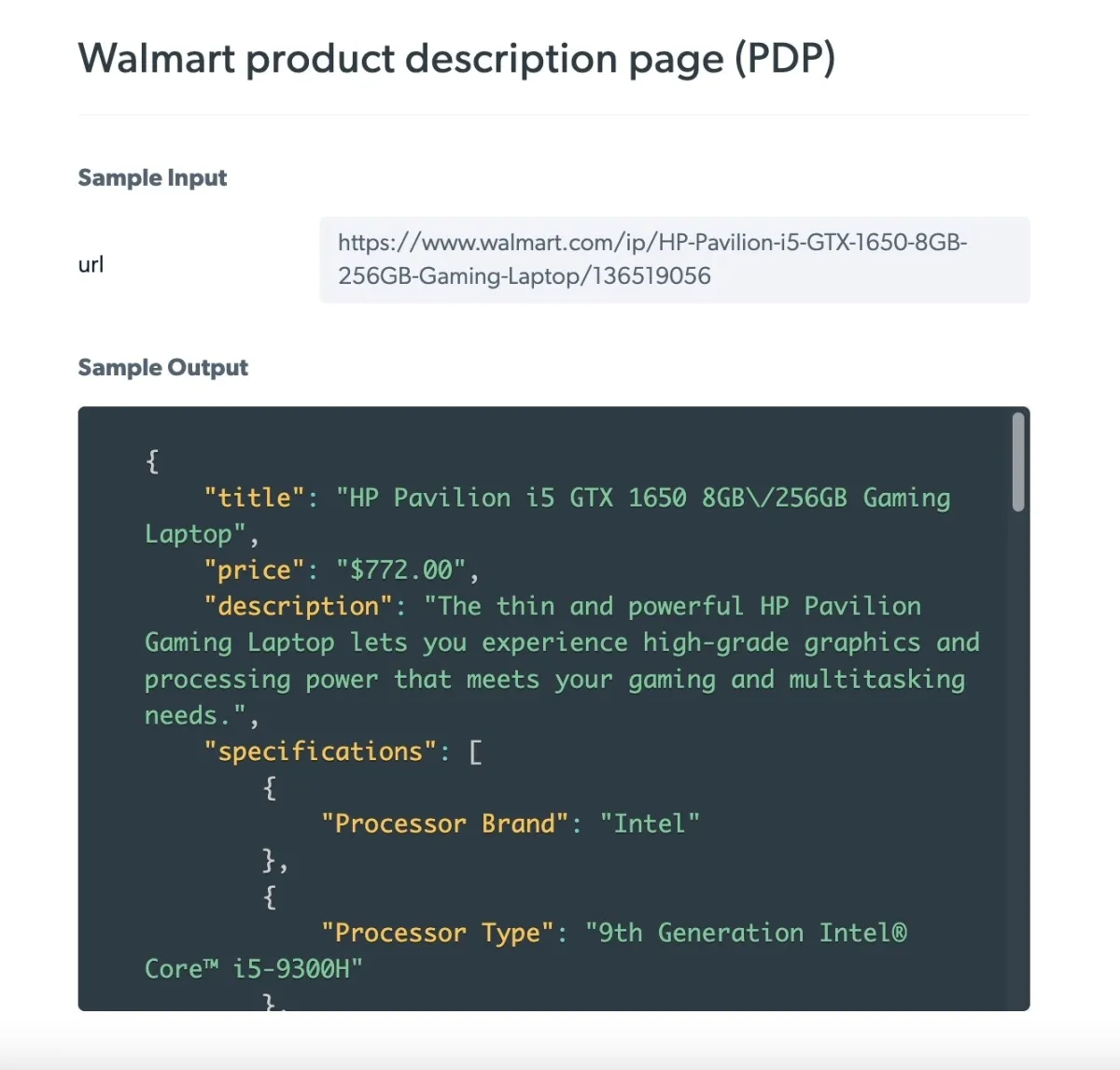

Bright Data’s Walmart Scraper enables businesses and individuals to automate collecting data from Walmart’s website while avoiding anti-scraping measures. Check out the sample examples of scraping Walmart product pages obtained with the Walmart data collector.

Figure 2: Product data collection from Walmart by URL

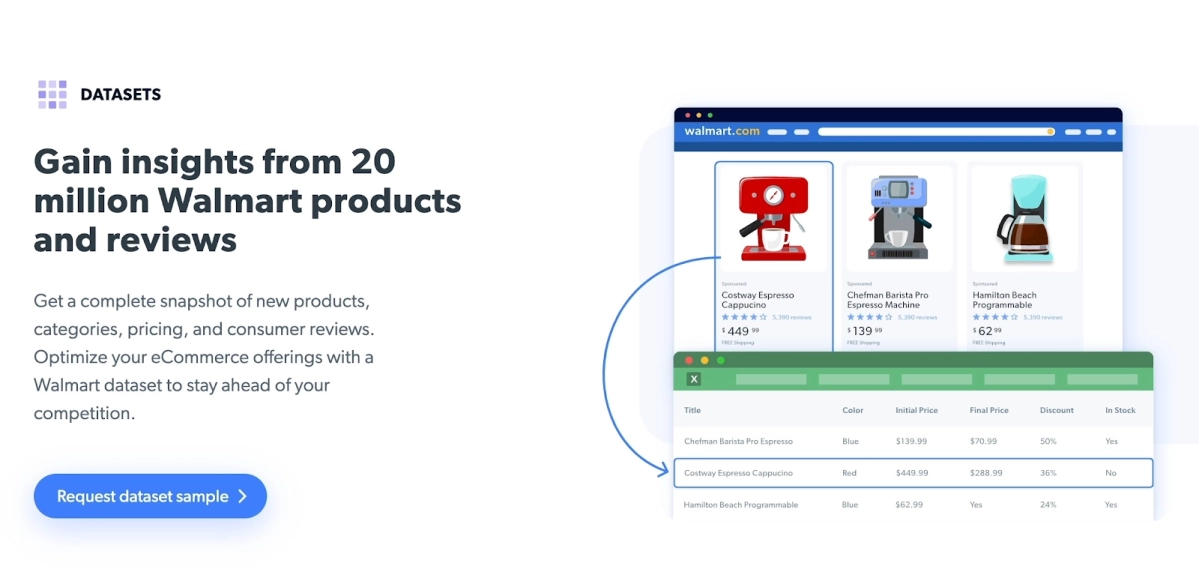

You can skip the web scraping process and quickly access the needed data using a ready-made dataset. Bright Data’s Walmart Datasets save time and resources; you don’t have to invest in developing your web scraping solutions.

Figure 3: Bright Data Walmart Datasets

Scraping Walmart Data with Python: A Step-by-Step Guide

- Set up your Python environment:

- You must first download and install Python; you can download the latest version of Python from the official website.

You can also download BeautifulSoup 4 from the official website. However, installing BeautifulSoup with the pip command is usually easier and faster.

Once you have imported the library, you can start using its functions for pulling data. For instance, you can make a request to walmart.com using Requests’ HTTP requests, such as PUT, DELETE, and HEAD. However, it does not support data parsing. You can use Beautiful Soup to parse HTML of the web page. Beautiful Soup is compatible with the built-in HTML parser.

- Make a request:

- Specify the URL of the product page you want to fetch. Data fetching sends a request to the destination and receives receives a response containing the requested data.

- Send a request to the target URL using the installed library. For example, you can use the requests.get() method to send a GET request to the specified Walmart product page.

- Parse the HTML content of the response using an HTML parser, such as Beautiful Soup or a third-party Python parser, like HTML5lib and lxml.

Best practices for Walmart web scraping

Scraping Walmart can be challenging due to the website’s anti-scraping techniques, including CAPTCHA challenges, IP blocking, and user agent detection. It is necessary to mimic the headers from your actual browser to avoid detection by the website you are scraping.

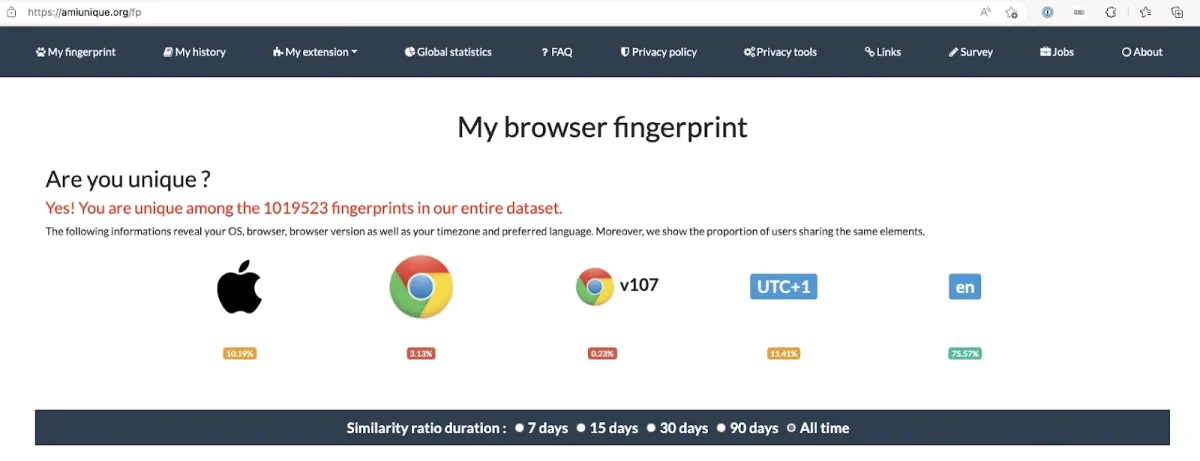

For example, when you send a request to the target server, you make your device information, such as your browser and operating system, available to the target website. The website will identify your activity as a script or an automated computer program such as a web crawler and block your IP address from accessing web services.

If you want to find out what is in your browser fingerprint and how unique your browser fingerprint is, you can visit AmIUnique (Figure 4).

Figure 4: An example of a browser fingerprint

Note that mimicking headers alone may not be enough to circumvent all anti-scraping measures. Therefore, additional measures such as rotating IP addresses (e.g. residential proxies) or using headless browsers may be required. In this scenario, there are a few best practices to consider:

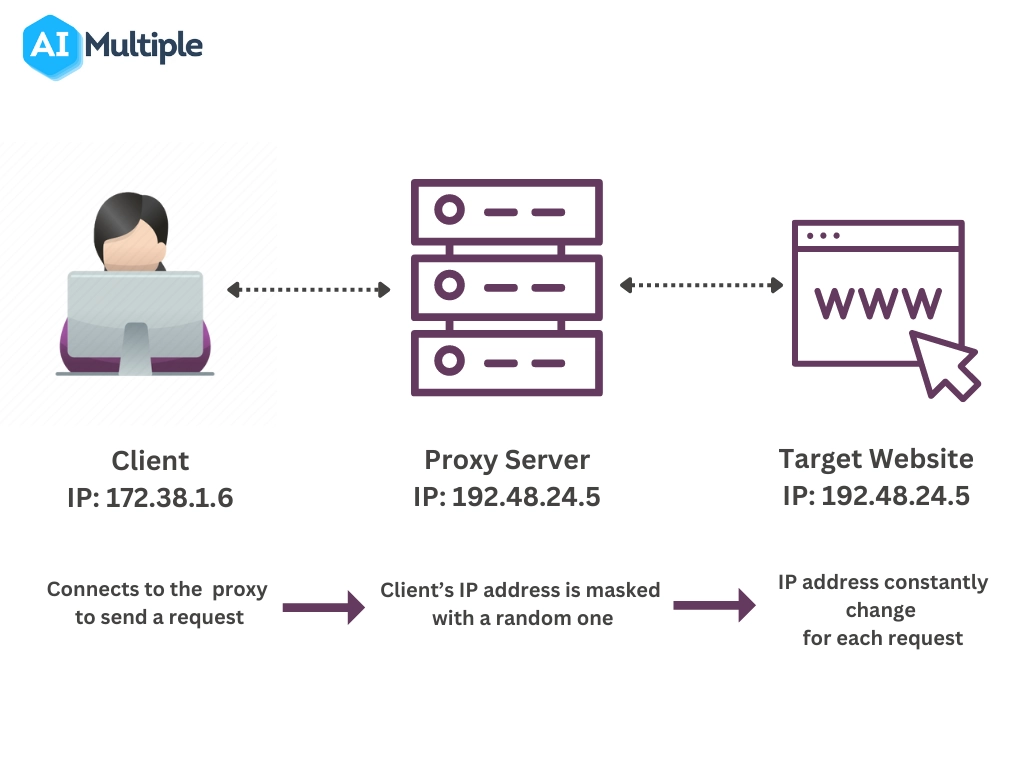

- Rotating proxies: Walmart may block requests originating from specific IP addresses. You can use a rotating proxy server to bypass these restrictions. Rotating proxies allow users to change their IP address with each connection request, making it more difficult for Walmart to track and block your requests (Figure 5).

Figure 5: An overview of how a rotating proxy server works

Sponsored

You can use proxies in conjunction with Walmart web scraping tools to speed up the data collection process. Oxylabs’ rotating ISP proxies assign a new IP address from the IP pool of datacenter and residential proxies. This makes your scraper appear more human-like and makes the scraping process less detectable.

- Including a User-Agent: The ‘User-Agent’ header can help you avoid being detected as a web scraper by Walmart or other eCommerce websites implementing anti-scraping techniques. You can include a User-Agent header in your script using the Requests library in Python to mimic the headers from your actual browser. It is essential to ensure that your data collection activities are legal and ethical, and that you are not violating Walmart’s terms of service.

- CAPTCHA solving: CAPTCHAs prevent automated scripts from accessing and scraping website content (Figure 6). To automate the process of solving CAPTCHAs, you can use a CAPTCHA-solving library to automate the process of solving CAPTCHAs, such as Pytesseract, or third-party CAPTCHA-solving services, such as Bright Data’s Web Unlocker.

Figure 6: An example of image-based CAPTCHA challenge

- Headless browsers: A headless browser, such as Selenium or Puppeteer, can simulate real user interactions, making it more difficult for websites employing anti-scraping measures, such as Walmart, to detect that a user is using an automated script.

Methodology

We included 100 URLs from both Walmart product and Walmart search pages for each domain. If a web scraper consistently returns successful results over 90% of the time for a specific page type (e.g., Walmart search pages), and the correctness of the results is validated through random sampling of 10 URLs, we list that provider as a scraping API for that page type.

For more details, see our web scraping API benchmark methodology.

Comments

Your email address will not be published. All fields are required.