We benchmarked 15 leading multimodal AI models on visual reasoning using 200 visual-based questions. The evaluation consisted of two tracks: 100 chart understanding questions testing data visualization interpretation, and 100 visual logic questions assessing pattern recognition and spatial reasoning. Each question was run 5 times to ensure consistent and reliable results.

Visual reasoning benchmark

See our benchmark methodology to learn our testing procedures.

gemini-3.1-pro-preview and gemini-3-pro-preview lead the leaderboard, followed by gpt-5.2, kimi-k2.5, and gpt-5.2-pro, which head the next group of models. While most models perform well on data-driven tasks, llama-4-maverick still shows a gap in connecting visual inputs with logical steps.

Visual logic

Visual logic requires pattern recognition and spatial reasoning. gemini-3.1-pro-preview leads the visual logic test, showing the highest performance in abstract reasoning tasks. Many models score lower here than in chart analysis, and llama-4-maverick in particular struggles with these tasks.

Chart understanding

Models are more proficient at chart interpretation than at visual logic. gemini-3.1-pro-preview has the highest score in the chart understanding tests, followed closely by gemini-3-pro-preview and gemini-2.5-pro, showing a strong ability to decode structured data and visualizations. claude-opus-4.6 and claude-sonnet-4.6 score higher on chart interpretation than on visual logic. Overall, data-driven visual tasks are more accessible to current multimodal models than pattern recognition.

Statistical reliability of visual reasoning performance (95% CI)

We calculated the 95% Confidence Intervals (CI) through 10,000 bootstrap resamples to define the margin of error for each model, showing the range within which their true performance likely falls.
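The interval calculation can be sketched as a percentile bootstrap. This is a minimal illustration, not the exact procedure used for the benchmark: the function name `bootstrap_ci`, the use of per-question 0/1 outcomes as the resampling unit, and the percentile method are all assumptions.

```python
import random

def bootstrap_ci(outcomes, n_resamples=10_000, confidence=0.95, seed=0):
    """Percentile bootstrap CI for mean accuracy.

    outcomes: list of 0/1 values (1 = correct). The resampling unit is an
    assumption; the article does not state how runs were pooled.
    """
    rng = random.Random(seed)
    n = len(outcomes)
    means = []
    for _ in range(n_resamples):
        # Draw n outcomes with replacement and record the resample's accuracy.
        sample = rng.choices(outcomes, k=n)
        means.append(sum(sample) / n)
    means.sort()
    alpha = (1.0 - confidence) / 2.0
    lower = means[int(alpha * n_resamples)]
    upper = means[min(n_resamples - 1, int((1.0 - alpha) * n_resamples))]
    return sum(outcomes) / n, lower, upper

# Illustrative data only: 150 correct answers out of 200.
accuracy, low, high = bootstrap_ci([1] * 150 + [0] * 50)
print(f"accuracy={accuracy:.3f}, 95% CI=({low:.3f}, {high:.3f})")
```

A narrower interval means a model's score is less sensitive to which questions happened to be sampled, so overlapping intervals between two models suggest their ranking should be read with caution.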

Benchmark questions on where LLMs excel and struggle most

Chart question with the lowest LLM success rate

Figure 1: Bar chart showing Star Sales Volumes across 12 months with four clustered bars per month (1998-2000 data). Each month displays solid, white, and striped bars in close grouping.

Note: All charts were obtained from Hitbullseye.1

Question: If the sales of three consecutive years are steadily increasing or steadily decreasing, then it is called a steady trend. Which months show a steadily increasing trend across three consecutive years?
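The rule in this question is simple once the bar values are known, which is why the failures point to perception rather than logic. A small sketch of the rule follows; the helper name `steady_trend` and the sales figures are made up for illustration and are not read from the chart.

```python
def steady_trend(values):
    """Classify a sequence of yearly values as a steady trend.

    Returns "increasing" if every year is higher than the one before,
    "decreasing" if every year is lower, and None otherwise.
    """
    if len(values) < 2:
        return None
    pairs = list(zip(values, values[1:]))
    if all(earlier < later for earlier, later in pairs):
        return "increasing"
    if all(earlier > later for earlier, later in pairs):
        return "decreasing"
    return None

# Hypothetical (1998, 1999, 2000) values, NOT taken from Figure 1.
sales = {"Jan": (10, 12, 15), "Jun": (14, 11, 16), "Dec": (20, 18, 17)}
increasing_months = [m for m, v in sales.items() if steady_trend(v) == "increasing"]
print(increasing_months)  # ['Jan']
```

In this made-up data, "Jun" dips in the middle year and therefore has no steady trend. A model that misreads the height or year of a single bar would classify such a month incorrectly.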

For example, June's actual sales in 1999 were lower than in 1998, which is a decrease, yet models incorrectly classified June as steadily increasing. Most models made the same mistake on this question.

With four bars clustered together per month, models struggled with bar-to-year mapping and relative height perception. They could not reliably tell which striped, solid, or white bar belonged to which year, so they read bars in the wrong order or misjudged their heights.

This revealed a fundamental limitation in visual-spatial reasoning: current models lacked the pixel-precise perception needed to correctly measure and sequence densely packed bars, leading to systematic misidentification of trends.

Chart question with the highest LLM success rate

Figure 2: Bar chart showing voter turnout percentages in Indian general elections from 1952 to 1998. One bar per election year with clear spacing between bars.

Question: The highest and lowest ever voter turnout (in percentage) were respectively in which years?

All models answered this question correctly. This success shows models excel at simple min-max identification, finding the tallest and shortest bars.

Unlike the clustered 4-bar groups, which are confusing, this chart has a single bar per year with clear spacing, making direct visual comparison straightforward. Models perform well on purely observational tasks that require no complex bar-to-category mapping.

Visual logic question with the highest LLM success rate

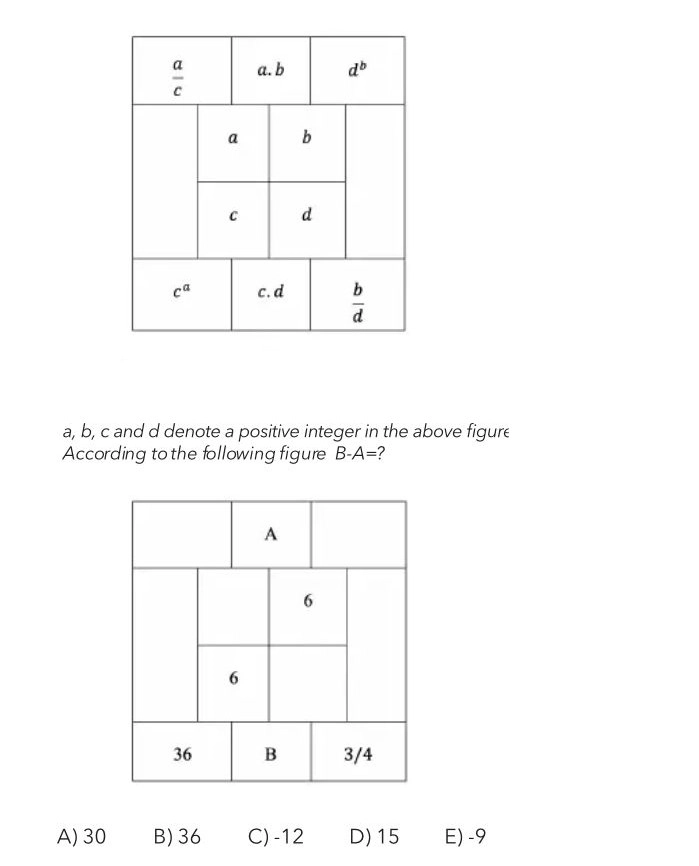

Figure 3: Two aligned 3×3 grids showing algebraic pattern matching. The top grid contains variables and their operations (multiplication, division, exponents). The bottom grid shows numerical values, with some cells filled (6, 36, 3/4) and two unknowns (A, B). The question asks to find B-A.

Success came from the clear mathematical pattern visible in the table structure (algebraic relationships like a×b, c×d). The simple grid layout, with no visual complexity, allowed models to focus solely on numerical inference and logical deduction.

Models excel when problems involve explicit mathematical patterns that can be solved through step-by-step reasoning, demonstrating their strength in symbolic logic and pattern recognition when visual distractions are minimal.

Visual logic question with the lowest LLM success rate

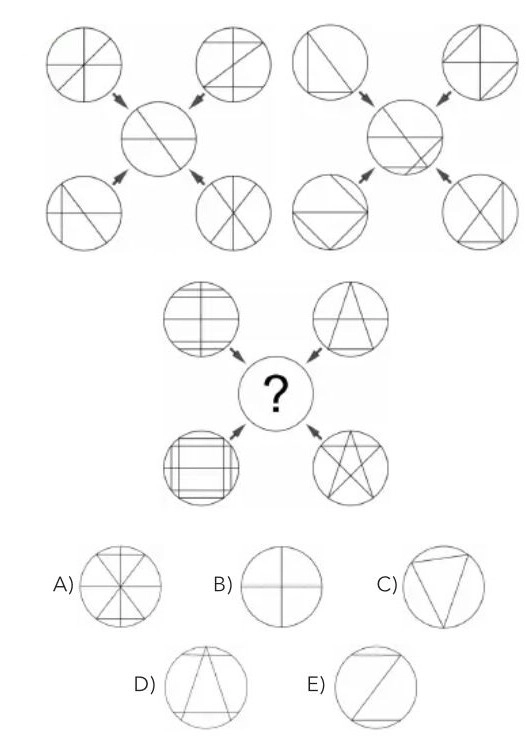

Figure 4: Pattern recognition puzzle with circles containing different internal line patterns and geometric shapes. Two example sequences with arrows shown at the top, followed by a question asking to complete the third sequence from five multiple-choice options.

The difficulty stems from the need for abstract visual pattern recognition: models must infer geometric transformation rules from multiple examples.

This demands pure spatial reasoning to understand how shapes rotate, transform, and relate to each other. Models struggle with rule inference from visual sequences when no explicit numerical or textual guidance is available, only spatial patterns.

What is visual reasoning?

Visual reasoning is a model’s ability to interpret images, connect visual elements, and answer questions that require understanding both visual and textual information. This capability extends beyond simple object recognition to tasks like analyzing data visualizations, identifying spatial patterns, and understanding relationships between visual elements.

Our benchmark evaluated this through two distinct tracks to test different cognitive aspects: chart understanding, where models interpreted bar charts, line graphs, and scatter plots to assess their ability to extract structured information from data visualizations; and visual logic, where they tackled pattern recognition puzzles and spatial reasoning problems to measure abstract reasoning without explicit numerical guidance. This division reflects the fundamental distinction in how models process explicit data versus implicit patterns.

Models achieve visual reasoning through different architectural approaches. For example, the Cola framework coordinates multiple vision-language models where each provides captions and plausible answers, then a central LLM evaluates these options and selects the most accurate response.

Figure 5: Graph showing how Cola leverages a coordinative language model for visual reasoning.2

Another example is the CVR-LLM framework, which improves reasoning by converting images into context-aware descriptions using the CaID method and selecting relevant examples with the CVR-ICL procedure. This framework treats image information as text-based representations, enabling the LLM to analyze associations more effectively across various types of multimodal tasks.3

How visual reasoning works in LLMs

LLMs do not perceive images directly. They rely on vision encoders that convert images into structured representations tailored for language models. The encoder identifies objects, textures, spatial relationships, and visual patterns. The LLM then combines this representation with the text query to build a reasoning chain.

Coordination or refinement

Two main mechanisms exist for complex visual scenarios: coordination, where an LLM integrates outputs from multiple vision models to cross-check interpretations; and refinement, where the LLM iteratively improves image descriptions through feedback loops that identify missing information. Both address limitations where single models fail to analyze complex scenarios.
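Both mechanisms can be sketched with plain callables. This is a toy illustration under stated assumptions: `coordinate`, `majority_arbiter`, `refine`, `describe`, and `find_gaps` are hypothetical names, the vision models are stand-in functions, and a majority vote replaces the LLM that would normally judge the candidate answers.

```python
from collections import Counter

def coordinate(image, question, vision_models, arbiter):
    """Coordination: collect one candidate answer per vision model,
    then let an arbiter (normally an LLM) pick the final answer."""
    candidates = [model(image, question) for model in vision_models]
    return arbiter(question, candidates)

def majority_arbiter(question, candidates):
    """Stand-in arbiter: majority vote instead of an LLM judgment."""
    return Counter(candidates).most_common(1)[0][0]

def refine(image, question, describe, find_gaps, max_rounds=3):
    """Refinement: re-describe the image until no gaps are reported."""
    description = describe(image, question, [])
    for _ in range(max_rounds):
        gaps = find_gaps(description, question)
        if not gaps:
            break
        # Feed the missing items back so the next description covers them.
        description = describe(image, question, gaps)
    return description

# Stub models for illustration only.
models = [lambda img, q: "B", lambda img, q: "B", lambda img, q: "C"]
print(coordinate(None, "Which option completes the pattern?", models, majority_arbiter))
```

In a real system, each stub would call an actual vision-language model, and the arbiter and gap finder would be prompts to an LLM. The `max_rounds` cap keeps the feedback loop from running indefinitely.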

In-context learning for multimodal reasoning

Some frameworks retrieve similar examples from training data, providing the model with templates for interpreting visual inputs. These demonstrations help the model apply learned reasoning patterns to new problems.
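The retrieval step can be sketched as a nearest-neighbor lookup over example embeddings. The sketch below assumes each stored example already has an embedding vector; `retrieve_examples`, `build_prompt`, and the toy two-dimensional vectors are illustrative and do not reproduce any specific framework's procedure.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve_examples(query_vec, bank, k=2):
    """Return the k stored examples most similar to the query."""
    ranked = sorted(bank, key=lambda ex: cosine(query_vec, ex["embedding"]), reverse=True)
    return ranked[:k]

def build_prompt(question, examples):
    """Prepend retrieved demonstrations to the new question."""
    demos = "\n\n".join(f"Q: {ex['question']}\nA: {ex['answer']}" for ex in examples)
    return f"{demos}\n\nQ: {question}\nA:"

# Toy example bank; real embeddings would come from an encoder model.
bank = [
    {"embedding": [1.0, 0.0], "question": "Which bar is tallest?", "answer": "B"},
    {"embedding": [0.0, 1.0], "question": "Which shape comes next?", "answer": "D"},
    {"embedding": [0.9, 0.1], "question": "Which year had the lowest value?", "answer": "A"},
]
examples = retrieve_examples([1.0, 0.0], bank, k=2)
print(build_prompt("Which month had the highest sales?", examples))
```

Here the two chart-style examples are retrieved for a chart-style query, while the pattern puzzle is left out.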

Producing the final explanation

The LLM produces an answer supported by a reasoning process, explaining how it interpreted the image, which visual elements it relied on, and the logical connections it made.

Chain-of-Thought reasoning in visual tasks

Chain-of-Thought (CoT) reasoning has emerged as an important approach in visual reasoning. Instead of analyzing an image all at once, models now break down visual problems into smaller, sequential steps, similar to how humans solve complex problems by thinking through them step by step.

Visual CoT enables models to dynamically adjust focus across different spatial regions of an image, addressing a key limitation where models previously relied on fixed-granularity image processing. For example, when analyzing a complex chart, the model might first identify the axes, then examine individual data points, and finally compare trends, rather than trying to understand everything simultaneously.

This approach integrates reinforcement learning and imitation learning to align models more closely with human reasoning patterns. This represents a fundamental shift from passive pattern recognition to active visual problem-solving, where models actively explore and reason about what they see.4

Business applications of visual reasoning in LLMs

LLMs with visual capabilities can support multiple business scenarios. These applications depend on the model’s ability to analyze images, link them with text data, and produce reliable insights.

Document and content analysis

Businesses handle diagrams, engineering drawings, scientific journal figures, and various forms of visual data. A visual reasoning model can:

- Detect missing or incorrect elements.

- Identify objects or signs in the lower part or corners of the diagrams.

- Connect text and image segments for quality checks.

- Extract structured information for further deployment or reporting.

For example, Intuit integrated Google Cloud’s Doc AI and Gemini models to autofill tax returns across common U.S. tax forms, improving both speed and accuracy in document processing.5

Quality inspection and operations

In manufacturing and logistics, models can inspect products or packages. Visual reasoning helps detect defects, misalignments, or unusual patterns. The model can compare images against a reference and generate an explanation of what changed or what is missing.

Intel, for instance, uses AI vision inspection systems that save $2 million annually, with manufacturers typically achieving ROI within 6-12 months through reduced scrap and fewer customer returns.6

Retail and eCommerce

Models analyze product images, identify key attributes, and match them to catalog data. Visual search capabilities let customers upload images to find similar products using computer vision, while AI-powered size recommendation engines have reduced return rates by 20-30%. These systems also detect inconsistencies between product descriptions and images.7

Security and monitoring

Visual reasoning supports video and image inspection tasks by analyzing frame sequences and detecting unusual patterns. Cambridge Industries implemented an AI-powered safety system for construction sites that reduced emergency repair costs by almost 50%.8

Marketing and user experience

Visual reasoning helps teams understand how users interact with digital content. A model can evaluate screenshots or creatives and provide insights about layout, object placement, and potential issues. This is especially relevant when assessing different categories of visual assets.

For example, Comeen uses Gemini AI to generate multilingual subtitles for workplace videos in 40 languages with one click, eliminating the multi-day, multi-vendor process that previously made content obsolete before publication.9

Comparative landscape: major players and their approaches

Chance AI

Chance AI is among the first commercial tools built around vision-first understanding. Its visual reasoning system analyzes images through cultural, historical, functional, and aesthetic lenses. Instead of assigning simple labels, it delivers structured insights that explain why an object, figure, or scene matters, such as the artwork’s style, symbolism, and historical context, alongside its subject.

The design prioritizes user experience by enabling meaning-driven exploration through images without typed queries. This moves beyond traditional computer vision toward interpretation, storytelling, and human-like explanation, making it especially relevant for creative industries, education, and tourism, where context adds value beyond recognition.10

Meta AI

Meta’s UniBench framework introduced a unified approach to evaluating visual reasoning by combining over fifty benchmarks for spatial understanding, compositional reasoning, and counting. Testing nearly sixty vision-language models, Meta found that scaling data and model size improves perception but not reasoning, with even advanced models failing at simple tasks like digit recognition and object counting.

These findings changed how visual reasoning progress is measured, highlighting the need for higher-quality data, targeted objectives, and structured learning rather than relying solely on larger models. For businesses, UniBench offers a transparent way to compare reasoning performance across multimodal tasks before deployment.11

Figure 6: The graph shows the median performance of 59 VLMs on 53 benchmarks, revealing that, despite progress, many models still perform near-chance level, particularly on tasks like Winoground, iNaturalist, DSPR, and others (blue: zero-shot median; grey: chance level).12

OpenAI

OpenAI advanced visual reasoning with the o3 and o4-mini models, which can think with images by integrating image manipulation into their reasoning. During analysis, they zoom, crop, or rotate images to focus on relevant details, mirroring how humans adjust visual attention when interpreting diagrams or drawings.

Tested across multimodal benchmarks such as chart interpretation, visual problem-solving, and mathematical reasoning, the models showed clear gains in accuracy and contextual understanding. However, results also exposed limitations, including inconsistent reasoning and occasional perceptual errors, underscoring the ongoing challenge of reliability in visual reasoning systems.

Figure 7: The graph shows the results of all models evaluated under high “reasoning effort” settings.13

Academic and open research efforts

VisuLogic: A Benchmark for Evaluating Visual Reasoning in Multi-modal Large Language Models

This paper introduces VisuLogic, a benchmark for evaluating the performance of multimodal models on visual reasoning tasks. It combines over fifty datasets covering various types of reasoning, including spatial relations, compositional logic, and object counting.

The authors analyze dozens of existing models and find that increasing size or data scale improves image recognition but not reasoning. Models often detect patterns without understanding relationships among objects. The paper emphasizes that reasoning-specific training, better data quality, and detailed evaluation are essential for meaningful progress.

VisuLogic offers a unified framework that helps researchers and enterprises analyze reasoning capabilities rather than relying solely on perception metrics, making it a valuable resource for assessing multimodal reasoning systems.14

Explain Before You Answer: A Survey on Compositional Visual Reasoning

This survey reviews current approaches to compositional visual reasoning, focusing on how models combine visual and textual cues to reach a correct answer. It identifies weaknesses in existing methods that rely on recognition rather than structured reasoning.

The authors propose training models to explain before answering, ensuring that each reasoning process is transparent and interpretable. They discuss techniques for aligning visual and linguistic representations so that models can better understand diagrams, figures, and object associations.

The paper concludes that aligned and explainable reasoning enhances reliability and interpretability in multimodal tasks. It highlights that the future of visual reasoning research depends on integrating explanation-based learning into model design.15

Challenges in LLM visual reasoning abilities

Progress in visual reasoning also brings technical and ethical challenges that need to be considered.

Reliability remains a key concern. As seen in our benchmark, models struggle with densely packed visualizations, failing at bar-to-year mapping and relative height perception in complex charts, which leads to systematic errors in trend identification. Meta's UniBench results point the same way: even advanced models fail at simple tasks like digit recognition and object counting, and scaling data improves perception but not reasoning.

Bias and interpretation issues are widespread. Visual reasoning models learn the biases present in their training data and reflect them when interpreting images, including cultural assumptions and stereotypes related to gender, race, age, and disability. For example, these biases can distort results when a model predicts the professions of people in an image or interprets a scenario.

Explainability is critical for trust. Models should explain their reasoning process transparently, especially in high-stakes applications like healthcare, hiring, and criminal justice where biased outputs cause harm.

Benchmark methodology

All models were evaluated via the OpenRouter API with standardized parameters: temperature was set to 0.8, and the max tokens parameter was left unset to avoid limiting reasoning capabilities. Models were instructed to respond with only a single letter (A-E) without explanation, though some models still provided detailed reasoning, which we parsed to extract final answers. Evaluation ran across all models in parallel. Each question was run 5 times to ensure consistent and reliable results.
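The setup described above can be sketched in two parts: building a request body and extracting the final letter from a response. This is a hedged illustration, not the benchmark's actual harness. The payload follows the OpenAI-style chat format that OpenRouter accepts, and the prompt wording, the helper names `build_payload` and `extract_answer`, and the parsing heuristics are assumptions.

```python
import base64
import re

def build_payload(model, question, image_bytes, mime="image/png"):
    """Build a chat-completions request body with one image attached."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "temperature": 0.8,
        # max_tokens is deliberately omitted so reasoning is not cut short.
        "messages": [{
            "role": "user",
            "content": [
                # Hypothetical instruction text; the exact prompt is not published.
                {"type": "text", "text": f"{question}\nRespond with only a single letter (A-E)."},
                {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            ],
        }],
    }

# Matches phrases such as "answer: D" or "the answer is (C)".
_ANSWER_PATTERN = re.compile(r"(?i:answer)\s*(?:(?i:is)|:)?\s*\(?([A-E])\b")

def extract_answer(response):
    """Return the final answer letter, or None if none can be found."""
    text = response.strip()
    bare = re.fullmatch(r"\(?([A-E])\)?\.?", text)
    if bare:
        return bare.group(1)
    matches = _ANSWER_PATTERN.findall(text)
    # Use the last stated answer, since reasoning may revise earlier guesses.
    return matches[-1] if matches else None

print(extract_answer("The bars rise every year. Final answer: D"))  # D
```

Returning `None` for unparseable responses, rather than guessing, keeps extraction failures visible so they can be counted as incorrect or reviewed by hand.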

The benchmark consisted of 200 questions split into two categories: Chart Understanding (100 questions) covering bar charts, line graphs, scatter plots, and complex data visualizations, and Visual Logic (100 questions) testing pattern recognition, spatial reasoning, and mathematical visual logic. All questions were presented in multiple-choice format with five options (A-E), requiring models to analyze images and select the correct answer.

Questions:

1. Chart understanding: We evaluated models on their ability to extract, interpret, and analyze information from various data visualizations:

- Bar charts: Horizontal and vertical configurations, stacked and grouped formats

- Line graphs: Single and multi-series trends, time-series data

- Scatter plots: Correlation analysis, pattern identification with labeled axes

- Pie charts: Percentage distributions and proportional reasoning

- Complex visualizations: Combination charts, dual-axis graphs, and multi-panel displays

2. Visual logic: We assessed abstract reasoning and spatial intelligence through:

- Pattern recognition: Identifying sequences and completing visual patterns

- Spatial reasoning: 3D visualization, cube nets, and geometric transformations

- Mathematical logic: Numerical patterns, algebraic reasoning, and combinatorics

- Abstract thinking: Symbol manipulation, logical deduction, and rule inference

Question format

- Answer format: Multiple choice (A, B, C, D, E)
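Because every item is a multiple-choice question that was run five times, results can be aggregated into a per-question success rate and a per-track accuracy. The sketch below shows one plausible aggregation. The function name `score`, the record layout, and the choice to average per-question rates are assumptions, since the article does not describe how repeated runs were combined.

```python
from collections import defaultdict

def score(records):
    """Aggregate graded runs.

    records: iterable of (track, question_id, is_correct) tuples,
    one tuple per run of a question.
    Returns (per-question success rates, per-track accuracy).
    """
    runs = defaultdict(list)
    for track, question_id, is_correct in records:
        runs[(track, question_id)].append(bool(is_correct))

    # Share of runs answered correctly for each question.
    success = {key: sum(results) / len(results) for key, results in runs.items()}

    # Track accuracy as the mean of its per-question success rates.
    by_track = defaultdict(list)
    for (track, _), rate in success.items():
        by_track[track].append(rate)
    accuracy = {track: sum(rates) / len(rates) for track, rates in by_track.items()}
    return success, accuracy

# Made-up grading results for three questions, five runs each.
records = (
    [("chart", "q1", True)] * 5
    + [("chart", "q2", False)] * 5
    + [("logic", "q1", True)] * 4 + [("logic", "q1", False)]
)
success, accuracy = score(records)
print(accuracy)
```

Per-question success rates are also what make it possible to single out the questions with the highest and lowest success rates across models.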
